Engineering researchers at the University of Minnesota Twin Cities have demonstrated a state-of-the-art hardware device that could reduce energy consumption for artificial intelligence (AI) computing applications by a factor of at least 1,000.

Tag: Machine Learning

How Machine Learning Is Propelling Structural Biology

Cell biologist embraces new tools to study human development on the smallest scale

Advanced Deep Learning and UAV Imagery Boost Precision Agriculture for Future Food Security

A research team investigated the efficacy of AlexNet, an advanced Convolutional Neural Network (CNN) variant, for automatic crop classification using high-resolution aerial imagery from UAVs.

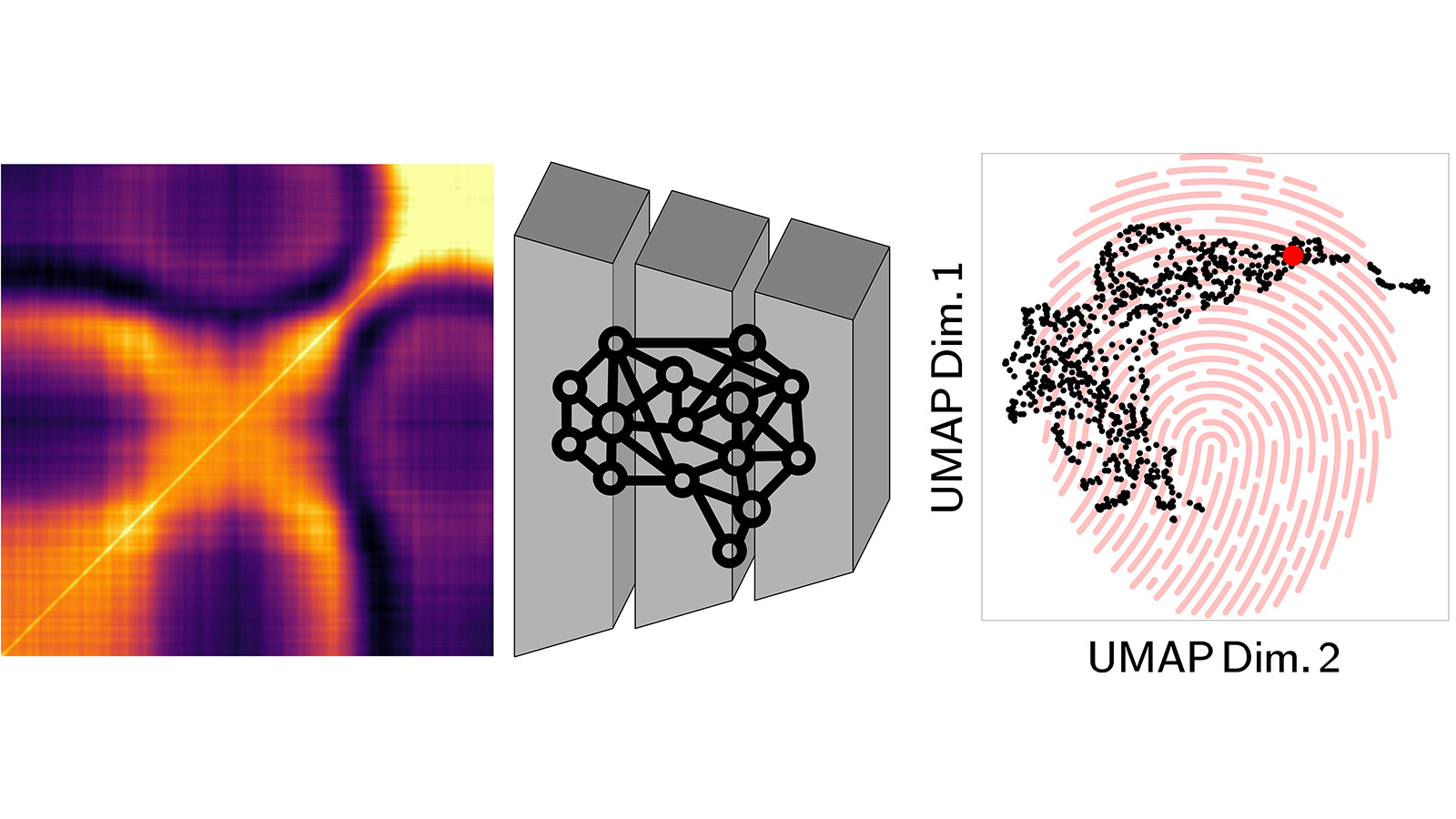

Scientists develop new artificial intelligence method to create material ‘fingerprints’

Researchers at the Advanced Photon Source and Center for Nanoscale Materials of the U.S. Department of Energy’s Argonne National Laboratory have developed a new technique that pairs artificial intelligence and X-ray science.

Predictive models for photosynthetic active radiation irradiance in temperate climates

Abstract: This research evaluated 10 different empirical models designed for predicting Photosynthetically Active Radiation (PAR) at higher latitudes, addressing atmospheric conditions specific to these regions. The research introduces the Musleh-Rahman (MR) model, which substitutes Diffuse Horizontal Irradiance (DHI) with Clear…

Study: Algorithms Used by Universities to Predict Student Success May Be Racially Biased

Predictive algorithms commonly used by colleges and universities to determine whether students will be successful may be racially biased against Black and Hispanic students, according to new research published today in AERA Open, a peer-reviewed journal of the American Educational Research Association.

Researchers develop an AI model that predicts Continuous Renal Replacement Therapy survival

A UCLA-led team has developed a machine-learning model that can predict with a high degree of accuracy the short-term survival of dialysis patients on Continuous Renal Replacement Therapy (CRRT).

Machine learning could aid efforts to answer long-standing astrophysical questions

PPPL physicists have developed a computer program incorporating machine learning that could help identify blobs of plasma in outer space known as plasmoids. In a novel twist, the program has been trained using simulated data.

New Radiative Transfer Modeling Framework Enhances Deep Learning for Plant Phenotyping

A research team has developed a radiative transfer modeling framework using Helios 3D plant modeling software to simulate RGB, multi-/hyperspectral, thermal, and depth camera images with fully resolved reference labels.

American College of Radiology Launches First Medical Practice Artificial Intelligence Quality Assurance Program

The American College of Radiology® (ACR®) today launched the ACR Recognized Center for Healthcare-AI (ARCH-AI), the first national artificial intelligence quality assurance program for radiology facilities. The program outlines building blocks of infrastructure, processes and governance in AI implementation in real-world practice.

Balancing Act: Novel Wearable Sensors and AI Transform Balance Assessment

Traditional methods to assess balance often suffer from subjectivity, aren’t comprehensive enough and can’t be administered remotely. They also are expensive and require specialized equipment and clinical expertise.

Easter Island’s ‘population crash’ never occurred, new research reveals

A detailed new analysis of Easter Island’s rock gardens by a research team including faculty at Binghamton University, State University of New York shows that a hypothetical “population crash” never occurred on the island.

How can AI cope with changing categories?

Bar-Ilan University researchers have uncovered a new universal law detailing how artificial neural networks handle an increasing number of categories for identification. This law demonstrates how the identification error rate of such networks increases with the number of required recognizable objects.

The role of institutions in early-stage entrepreneurship: An explainable artificial intelligence approach

Abstract: Although the importance of institutional conditions in fostering entrepreneurship is well established, less is known about the dominance of institutional dimensions, their predictive ability, and more complex non-linear relationships. To overcome the limitations of traditional regression approaches in addressing…

Researchers harness AI for autonomous discovery and optimization of materials

Today, researchers are developing ways to accelerate discovery by combining automated experiments, artificial intelligence and high-performance computing. A novel tool developed at Oak Ridge National Laboratory that leverages those technologies has demonstrated that AI can influence materials synthesis and conduct associated experiments without human supervision.

Groundbreaking LLNL and BridgeBio Oncology Therapeutics collaboration announces start of human trials for supercomputing-discovered cancer drug

In a substantial milestone for supercomputing-aided drug design, Lawrence Livermore National Laboratory (LLNL) and BridgeBio Oncology Therapeutics (BridgeBio) today announced clinical trials have begun for a first-in-class medication that targets specific genetic mutations implicated in many types of cancer.

Mount Sinai Health System named 2024 Hearst Health Prize winner

Hearst Health and the UCLA Center for SMART Health awarded the 2024 Hearst Health Prize to Mount Sinai Health System. Mount Sinai Health System was declared the winner for a machine learning application called NutriScan AI that facilitates faster identification and treatment of malnutrition in hospitalized patients.

LJI scientists develop new method to match genes to their molecular ‘switches’

LA JOLLA, CA—Scientists at La Jolla Institute for Immunology (LJI) have developed a new computational method for linking molecular marks on our DNA to gene activity. Their work may help researchers connect genes to the molecular “switches” that turn them on or off. This research, published in Genome Biology, is an important step toward harnessing machine learning approaches to better understand links between gene expression and disease development.

Mapping soil health: new index enhances soil organic carbon prediction

A cutting-edge machine learning model has been developed to predict soil organic carbon (SOC) levels, a critical factor for soil health and crop productivity. The innovative approach utilizes hyperspectral data to identify key spectral bands, offering a more precise and efficient method for assessing soil quality and supporting sustainable agricultural practices.

Aurora supercomputer heralds a new era of scientific innovation

Argonne’s Aurora supercomputer represents a leap forward in scientific research. Offering unprecedented speed and power, advanced hardware, and AI capabilities, Aurora ushers in a new era of supercomputing to revolutionize the way scientists conduct research and achieve breakthroughs.

Brave new virtual world fast becoming a reality in the mining sector

A virtual and robotic revolution in Australia’s mining industry could spell the end of fly-in-fly-out (FIFO) workers within a decade, according to one of the country’s leading geologists and immersive technology experts.

AI poised to usher in new level of concierge services to the public

Concierge services built on artificial intelligence have the potential to improve how hotels and other service businesses interact with customers, a new paper suggests.

Can you spot a deepfake?

University of South Australia computer scientist and AI expert Associate Professor Wolfgang Mayer demonstrates in this video how AI is getting closer to replicating voices and faces, and soon it will be very hard to tell the difference between deepfakes and reality.

Using artificial intelligence to speed up and improve the most computationally-intensive aspects of plasma physics in fusion

Researchers at the Department of Energy’s (DOE) Princeton Plasma Physics Laboratory (PPPL) are using artificial intelligence to perfect the design of the vessels surrounding the super-hot plasma, optimize heating methods and maintain stable control of the reaction for increasingly long periods.

Women’s Health Month: Artificial Intelligence Can Improve OB-GYN Care

Cedars-Sinai investigators are using artificial intelligence (AI) to reduce serious health risks associated with pregnancy and childbirth and improve screening for some gynecological cancers.

Rutgers-Led Statewide Translational Research Institute Is Awarded $39.7 Million National Institutes of Health Grant

The National Institutes of Health (NIH) has awarded the Rutgers Institute for Translational Medicine and Science $39,673,786 over seven years to build and improve upon infrastructure that promotes clinical and translational science through the New Jersey Alliance for Clinical and Translational Science (NJ ACTS).

Study led by ORNL informs climate resilience strategies in urban, rural areas

Local decision-makers looking for ways to reduce the impact of heat waves on their communities have a valuable new resource at their disposal: a study on vegetation resilience.

Research to Prevent Blindness Opens Applications for Vision Research Grants

Research to Prevent Blindness is pleased to announce that it has opened a new round of grant funding for high-impact vision research, including research related to glaucoma, age-related macular degeneration, inherited retinal diseases, myopia, amblyopia, low vision and many more.

Researchers offer US roadmap to close the carbon cycle

Scientists at Oak Ridge National Laboratory and six other Department of Energy national laboratories have developed a United States-based perspective for achieving net-zero carbon emissions. The roadmap was recently published in the journal Nature Reviews Chemistry.

Machine learning tool identifies rare, undiagnosed immune disorders through patients’ electronic health records

Researchers say a machine learning tool can identify many patients with rare, undiagnosed diseases years earlier, potentially improving outcomes and reducing cost and morbidity. The findings, led by researchers at UCLA Health, are described in Science Translational Medicine.

Wake Forest University School of Medicine Approved for $1 Million in PCORI Funding for Patient Subgroup Discovery Study

Researchers at Wake Forest University School of Medicine have been approved for a $1 million award by the Patient-Centered Outcomes Research Institute (PCORI) for a methodology study.

Andrew Smith, MD, PhD, named chair of Diagnostic Imaging at St. Jude Children’s Research Hospital

Smith is a nationally recognized academic radiologist with expertise in body and oncologic imaging, clinical trials and imaging research and the application of artificial intelligence (AI) in imaging and medicine.

How Scientists Are Accelerating Chemistry Discoveries With Automation

Researchers have developed an automated workflow that could accelerate the discovery of new pharmaceutical drugs and other useful products. The new approach could enable real-time reaction analysis and identify new chemical-reaction products much faster than current laboratory methods.

Moffitt Cancer Center to Revolutionize Cancer Care Delivery Using AI and Machine Learning with NVIDIA, Oracle and Deloitte

Moffitt Cancer Center announced today a collaboration with NVIDIA, Oracle and Deloitte on an initiative aimed at revolutionizing cancer care delivery through advanced artificial intelligence and machine learning technologies.

Q&A: How to train AI when you don’t have enough data

As researchers explore potential applications for AI, they have found scenarios where AI could be really useful but there’s not enough data to accurately train the algorithms. Jenq-Neng Hwang, University of Washington professor of electrical and computer engineering, specializes in these issues.

The Time Is Now for Artificial Intelligence, Machine Learning

From artificial intelligence (AI) and data integration to natural language processing and statistics, the Cedars-Sinai Department of Computational Biomedicine is utilizing the latest technological advances to find solutions to some of the most complex healthcare issues.

Domain knowledge drives data-driven artificial intelligence in well logging

In well logging interpretation, researchers embed logging response functions, which encode domain knowledge, into the loss function of data-driven machine learning models to constrain the models’ outputs.
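The idea of folding a logging response function into a model's loss can be sketched in a few lines of Python. This is a hypothetical illustration, not the researchers' method. It assumes the model predicts porosity, uses the standard linear density-porosity mixing relation as the response function, and assumes sandstone matrix and pore-fluid densities of 2.65 and 1.0 g/cm³. The names `density_response` and `constrained_loss` and the `weight` parameter are invented for this example.

```python
"""Minimal sketch of a domain-knowledge-constrained loss (assumed setup, not the study's code)."""

# Assumed constants for a clean sandstone, in g/cm^3 (illustrative values).
RHO_MATRIX = 2.65
RHO_FLUID = 1.0


def density_response(porosity: float) -> float:
    """Hypothetical forward model: bulk-density log from porosity via linear mixing."""
    return porosity * RHO_FLUID + (1.0 - porosity) * RHO_MATRIX


def mse(a: list[float], b: list[float]) -> float:
    """Mean squared error between two non-empty, equal-length sequences."""
    if len(a) != len(b) or not a:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def constrained_loss(
    pred_porosity: list[float],
    true_porosity: list[float],
    measured_density: list[float],
    weight: float = 1.0,
) -> float:
    """Data-fit term plus a penalty when predictions disagree with the measured log."""
    # Ordinary supervised term: predicted vs. labelled porosity.
    data_term = mse(pred_porosity, true_porosity)
    # Domain-knowledge term: push predictions through the response function
    # and compare the simulated log with the measured one.
    simulated = [density_response(p) for p in pred_porosity]
    physics_term = mse(simulated, measured_density)
    return data_term + weight * physics_term
```

With `weight=0.0` this reduces to an ordinary data-fit loss; raising `weight` increasingly penalizes porosity predictions whose simulated density log disagrees with the measured one, which is one way domain knowledge can constrain model outputs.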

Study Estimates Nearly 70 Percent of Children Under Six in Chicago May Be Exposed to Lead-Contaminated Tap Water

A new analysis led by researchers at the Johns Hopkins Bloomberg School of Public Health estimates that 68 percent of Chicago children under age six live in households with tap water containing detectable levels of lead.

Revolutionizing Carbon Neutrality: Machine Learning Paves the Way for Advanced CO2 Reduction Catalysts

A perspective highlights the transformative impact of machine learning (ML) on enhancing carbon dioxide reduction reactions (CO2RR), steering us closer to carbon neutrality.

SMU Chemist and Colleagues Develop Machine Learning Model for Atomic-level Interactions

Machine learning interatomic potentials (MLIPs) have become an efficient and less expensive alternative to traditional quantum chemical simulations.

Machine learning algorithm identifies individuals who experience the largest reduction in depression risk from Medicaid coverage

Previous research has demonstrated that Medicaid coverage reduces the risk for developing depression among recipients, but the question is who benefits most from coverage. Using a tool called machine learning causal forest to analyze data from the Oregon Health Insurance…

The role of machine learning and computer vision in Imageomics

A new field promises to usher in a new era of using machine learning and computer vision to tackle small and large-scale questions about the biology of organisms around the globe.

UTSW team’s new AI method may lead to ‘automated scientists’

UT Southwestern Medical Center researchers have developed an artificial intelligence (AI) method that writes its own algorithms and may one day operate as an “automated scientist” to extract the meaning behind complex datasets.

JMIR Neurotechnology Invites Submissions on Brain-Computer Interfaces (BCIs)

JMIR Publications is pleased to announce a new theme issue in JMIR Neurotechnology exploring brain-computer interfaces (BCIs) that represent the transformative convergence of neuroscience, engineering, and technology.

Widely used machine learning models reproduce dataset bias in Rice study

High-income communities overrepresented in relevant datasets for immunotherapy research.

Imageomics poised to enable new understanding of life

Imageomics, a new field of science, has made stunning progress in the past year and is on the verge of major discoveries about life on Earth, according to one of the founders of the discipline.

Tanya Berger-Wolf, faculty director of the Translational Data Analytics Institute at The Ohio State University, outlined the state of imageomics in a presentation at the annual meeting of the American Association for the Advancement of Science.

Shuffling the deck for privacy

By integrating an ensemble of privacy-preserving algorithms, a KAUST research team has developed a machine-learning approach that addresses a significant challenge in medical research: how to use the power of artificial intelligence (AI) to accelerate discovery from genomic data while protecting the privacy of individuals.

Q&A: What is the best route to fairer AI systems?

Mike Teodorescu, a University of Washington assistant professor in the Information School, proposes that private enterprise standards for fairer machine learning systems would inform governmental regulation.

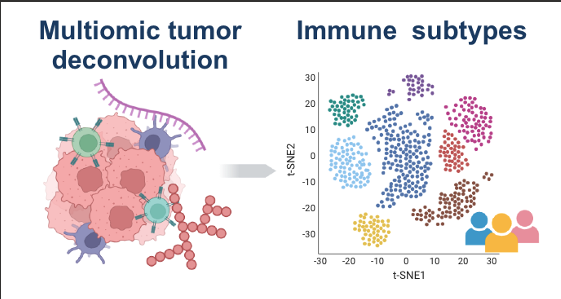

Researchers Characterize the Immune Landscape in Cancer

Researchers from the Icahn School of Medicine at Mount Sinai, in collaboration with the Clinical Proteomic Tumor Analysis Consortium of the National Institutes of Health, The University of Texas MD Anderson Cancer Center, Sylvester Comprehensive Cancer Center at the University of Miami Miller School of Medicine, and others, have unveiled a detailed understanding of immune responses in cancer, marking a significant development in the field. The findings were published in the February 14 online issue of Cell. Utilizing data from more than 1,000 tumors across 10 different cancers, the study is the first to integrate DNA, RNA, and proteomics (the study of proteins), revealing the complex interplay of immune cells in tumors. The data came from the Clinical Proteomic Tumor Analysis Consortium (CPTAC), a program under the National Cancer Institute.

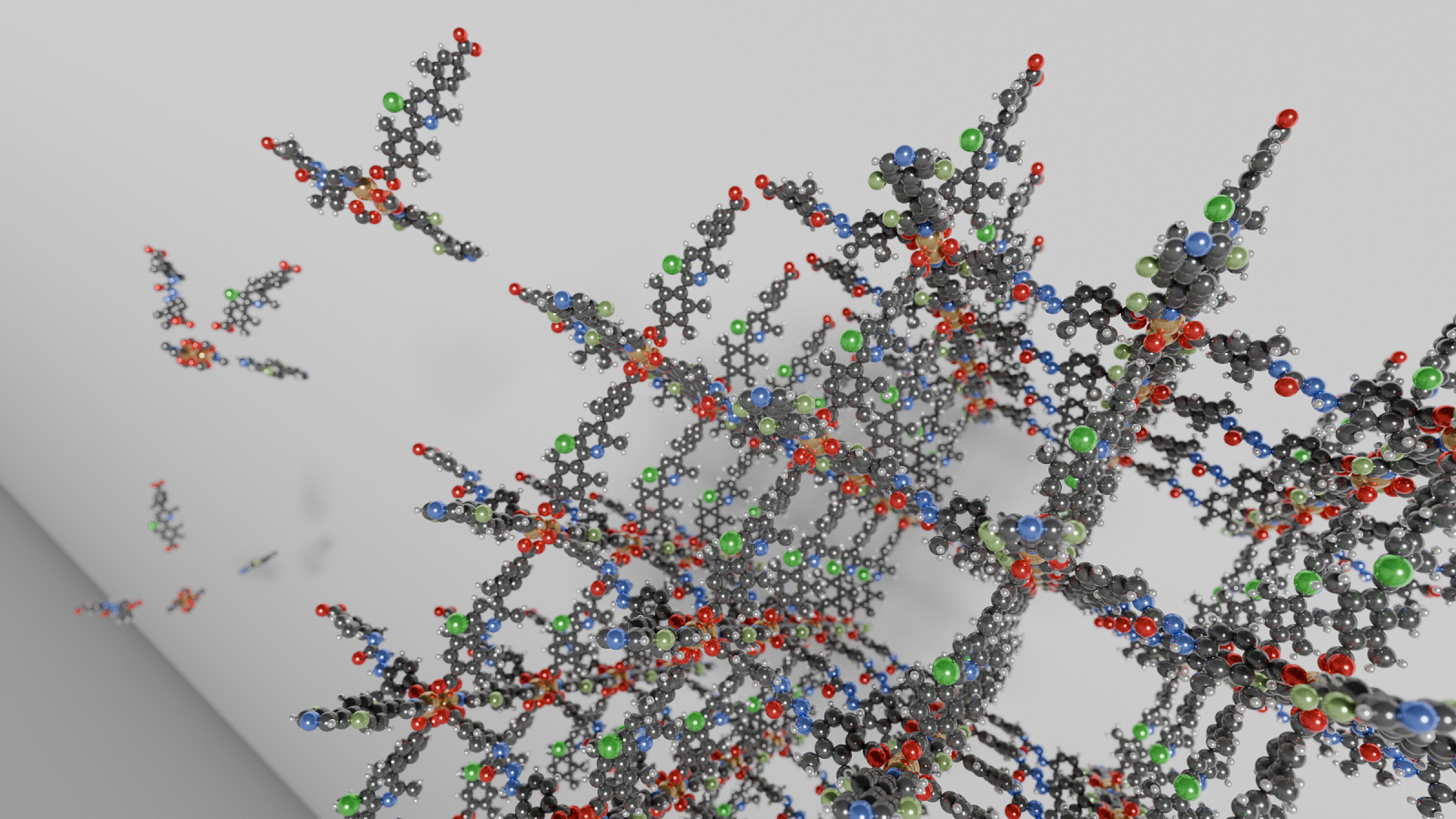

Argonne scientists use AI to identify new materials for carbon capture

Researchers at the U.S. Department of Energy’s Argonne National Laboratory have used new generative AI techniques to propose new metal-organic framework materials that could offer enhanced abilities to capture carbon.