On March 11, PPPL opened its new Quantum Diamond Lab, a space devoted to studying and refining the processes involved in using plasma, the electrically charged fourth state of matter, to create high-quality diamond material for quantum information science applications.

Tag: Computing

Eco-labeling: self or certification?

Researchers use game theory to analyze how a manufacturer in a green supply chain selects its eco-label strategy.

AI researchers expose critical vulnerabilities within major LLMs

Large Language Models (LLMs) such as ChatGPT and Bard have taken the world by storm this year, with companies investing millions to develop these AI tools, and some leading AI chatbots being valued in the billions.

Physicists demonstrate powerful physics phenomenon

In a new breakthrough, researchers have used a novel technique to confirm a previously undetected physics phenomenon that could be used to improve data storage in the next generation of computer devices.

Scientists uncovered mystery of important material for semiconductors at the surface

A team of scientists with Oak Ridge National Laboratory has investigated the behavior of hafnium oxide, or hafnia, because of its potential for use in novel semiconductor applications.

Discovering the unexpected way protected areas contribute to biodiversity

A study published in Nature found that, while protected areas in Southeast Asia were shown to be good for animals inside their borders, as expected, that protection also extended to nearby unprotected areas, which was a surprise.

How Scientists Are Accelerating Next-Gen Microelectronics

In a new Q&A, microelectronics expert and CHiPPS Director Ricardo Ruiz shares his perspective on keeping pace with Moore’s Law in the decades to come through a revolutionary technique called extreme ultraviolet lithography.

Rensselaer Polytechnic Institute Plans to Deploy First IBM Quantum System One on a University Campus

Today, it was announced that Rensselaer Polytechnic Institute will become the first university in the world to house an IBM Quantum System One. The IBM quantum computer, intended to be operational by January of 2024, will serve as the foundation of a new IBM Quantum Computational Center in partnership with Rensselaer Polytechnic Institute (RPI). Through the partnership, RPI aims to greatly enhance the educational experiences and research capabilities of students and researchers at RPI and other institutions, propel the Capital Region into a top location for talent, and accelerate New York’s growth as a technology epicenter.

Novel algorithm improves understanding of plasma shock waves in space

Scientists have used a recently developed technique to improve predictions of the timing and intensity of the solar wind’s strikes, which sometimes disrupt telecommunications satellites and damage electrical grids.

Jefferson Lab Hosts International Computing in High Energy and Nuclear Physics Conference

Experts in high-performance computing and data management are gathering in Norfolk next week for the 26th International Conference on Computing in High Energy and Nuclear Physics (CHEP2023). Held approximately every 18 months, this high-impact conference will take place at the Norfolk Marriott Waterside in Norfolk, Va., May 8-12. CHEP2023 is hosted by the U.S. Department of Energy’s Thomas Jefferson National Accelerator Facility in nearby Newport News, Va. This is the first in-person CHEP conference to be held since 2019.

Five Ways QSA is Advancing Quantum Computing

The Quantum Systems Accelerator has issued an impact report that details progress made since the center launched in 2020. Highlights include a record-setting quantum sensor that could be used to hunt dark matter, a machine learning algorithm to correct qubit errors in real time, and the first observation of several exotic states of matter using a 256-atom quantum device.

Q&A: How to make computing more sustainable

SLAC researcher Sadasivan Shankar talks about a new environmental effort starting at the lab – building a roadmap that will help researchers improve the energy efficiency of computing, from devices like cellphones to artificial intelligence.

Expert Available on Artificial Intelligence, ChatGPT

Rensselaer Polytechnic Institute’s James Hendler is the Director of the Future of Computing Institute; Tetherless World Professor of Computer, Web and Cognitive Sciences; and Director of the RPI-IBM Artificial Intelligence Research Collaboration. “In a nutshell, ChatGPT creates something not dissimilar…

Scientists develop novel method to explore plant-microbe interactions

DOE funding allows researchers to gain closer look into plant-microbe symbioses.

PPPL launches project to build the Princeton Plasma Innovation Center

PPPL moved forward with plans to build the Princeton Plasma Innovation Center (PPIC), a new state-of-the-art office and laboratory building and the first new building on campus in 50 years. The project kicked off during a meeting with architects on July 8.

Washington State Academy of Sciences Adds Six PNNL Researchers

The Washington State Academy of Sciences added six people from PNNL to its 2022 class of inductees.

Department of Energy Announces $78 Million for Research in High Energy Physics

Today, the U.S. Department of Energy (DOE) announced $78 million in funding for 58 research projects that will spur new discoveries in high energy physics. The projects—housed at 44 colleges and universities across 22 states—are exploring the fundamental science about the universe that also underlies technological advancements in medicine, computing, energy technologies, manufacturing, national security, and more.

Flawed AI Makes Robots Racist, Sexist

The work, led by Johns Hopkins University, the Georgia Institute of Technology, and University of Washington researchers, is believed to be the first to show that robots loaded with an accepted and widely used model operate with significant gender and racial biases. The work is set to be presented and published this week at the 2022 Conference on Fairness, Accountability, and Transparency.

Build-a-satellite program could fast track national security space missions

Valhalla, a Python-based performance modeling framework developed at Sandia National Laboratories, uses high-performance computing to build preliminary satellite designs based on mission requirements and then runs those designs through thousands of simulations.

Adelaide at the centre of next generation AI research

A new research centre that focuses on next-generation artificial intelligence (AI) technology will develop the high-calibre expertise Australia needs to compete in the coming machine learning-enabled global economy.

U.S. Department of Energy to Showcase National Lab Expertise at SC21

The scientific computing and networking leadership of the U.S. Department of Energy’s (DOE’s) national laboratories will be on display at SC21, the International Conference for High-Performance Computing, Networking, Storage and Analysis. The conference takes place Nov. 14-19 in St. Louis via a combination of on-site and online resources.

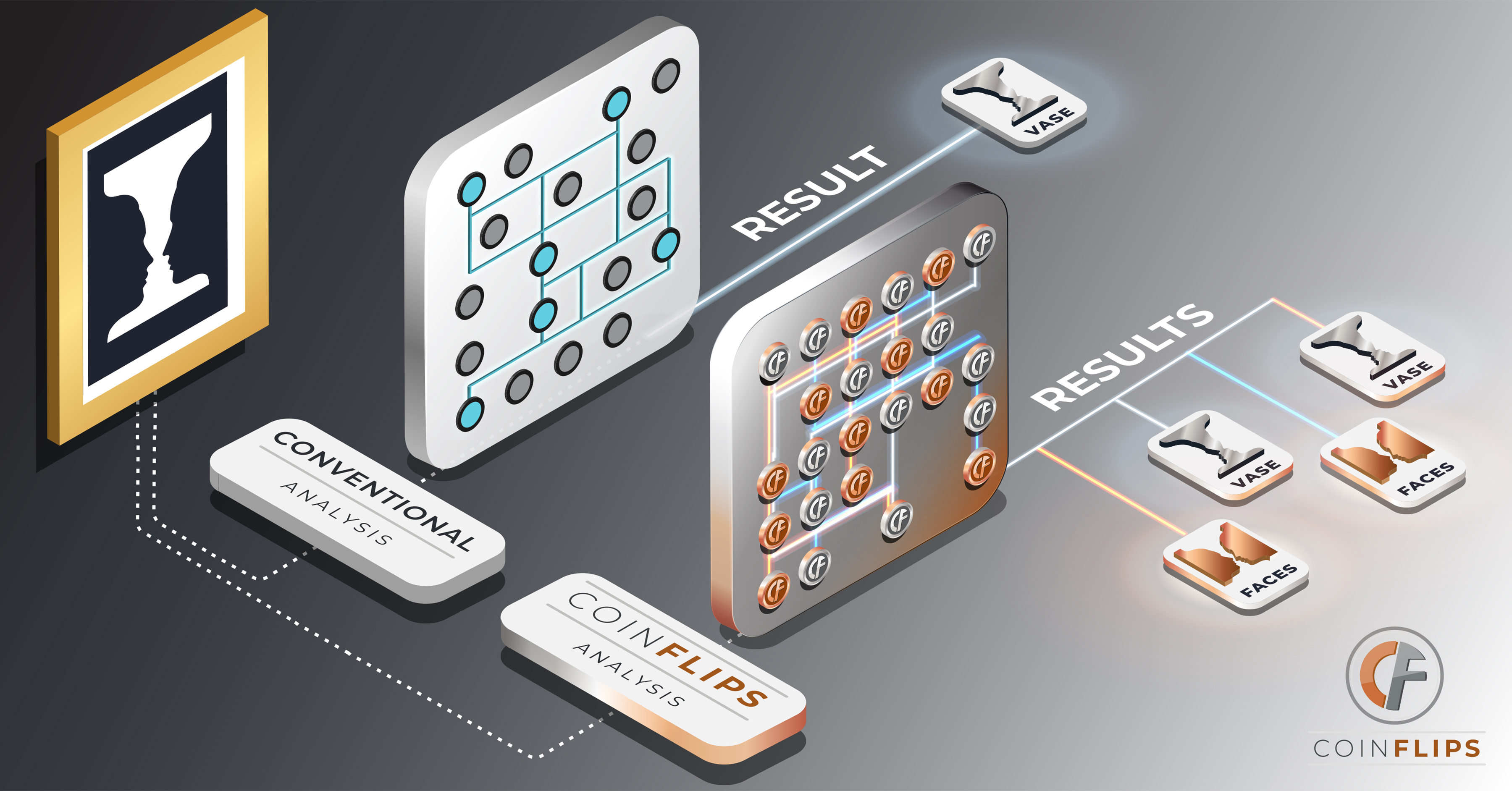

What if the secret to your brain’s elusive computing power is its randomness?

Scientists at Sandia National Laboratories are creating a concept for a new kind of computer for solving complex probability problems that involve random chance.

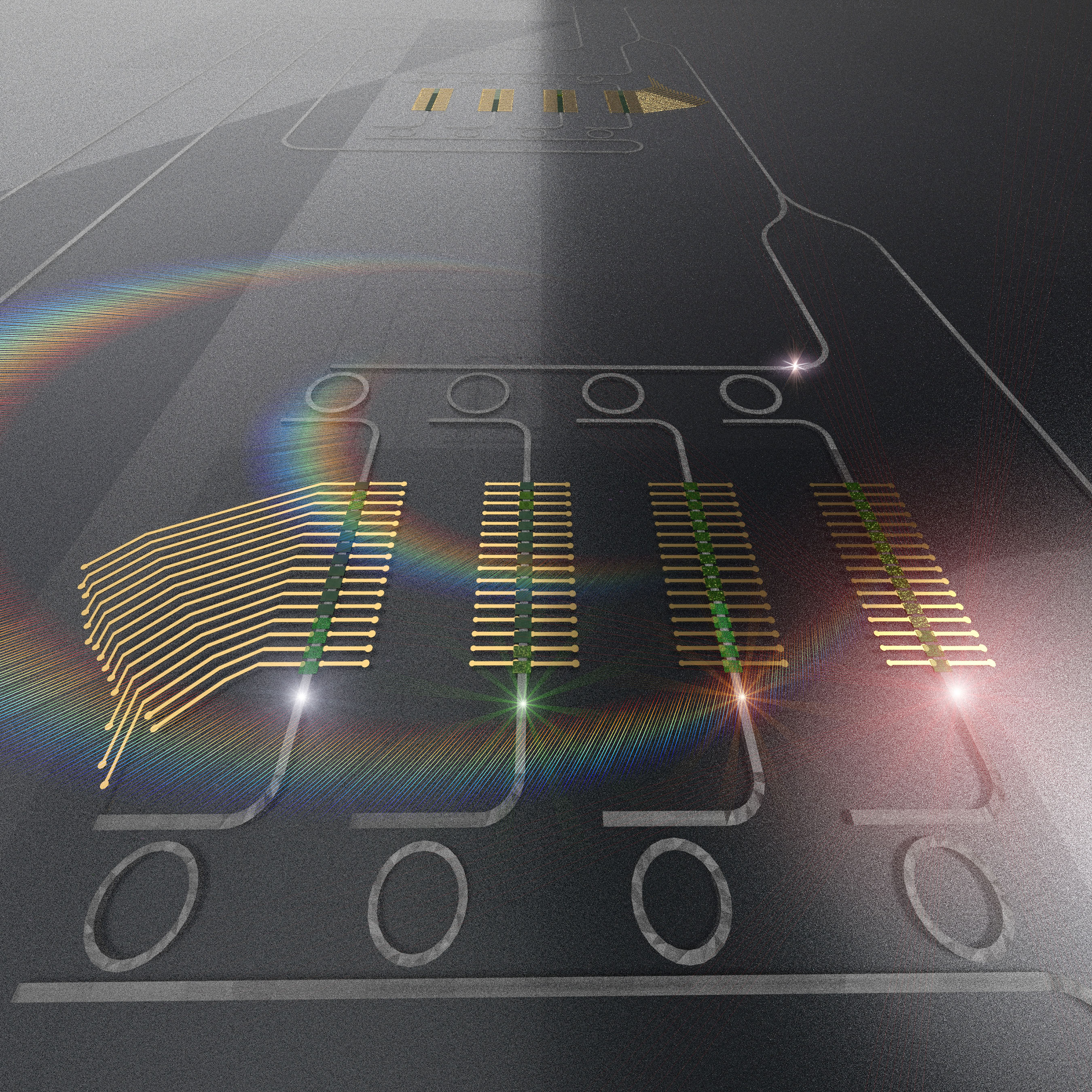

Researchers Develop Novel Analog Processor for High Performance Computing

Analog photonic solutions offer unique opportunities to address complex computational tasks with unprecedented performance in terms of energy dissipation and speeds, overcoming current limitations of modern computing architectures based on electron flows and digital approaches. In a new study published…

Cross-pollinating physicists use novel technique to improve the design of facilities that aim to harvest fusion energy

Scientists at PPPL have transferred a technique from one realm of plasma physics to another to enable the more efficient design of powerful magnets for doughnut-shaped fusion facilities known as tokamaks.

Department of Energy Announces $15.1 Million for Integrated Computational and Data Infrastructure for Science Research

The U.S. Department of Energy (DOE) announced $15.1 million for three collaborative research projects, at five universities, to advance the development of a flexible multi-tiered data and computational infrastructure to support a diverse collection of on-demand scientific data processing tasks and computationally intensive simulations.

Main Attraction: Scientists Create World’s Thinnest Magnet

Scientists at Berkeley Lab and UC Berkeley have created an ultrathin magnet that operates at room temperature. The ultrathin magnet could lead to new applications in computing and electronics – such as spintronic memory devices – and new tools for the study of quantum physics.

Scientists take first snapshots of ultrafast switching in a quantum electronic device

Scientists demonstrated a new way of observing atoms as they move in a tiny quantum electronic switch as it operates. Along the way, they discovered a new material state that could pave the way for faster, more energy-efficient computing.

Coalition’s new leadership renews focus on advocating for academic scientific computation

Computers play an integral role in nearly every discipline of research today, giving scientists the ability to discover new drugs, develop new materials, forecast the impacts of climate change, and solve some of today’s most challenging problems.

Quantum material’s subtle spin behavior proves theoretical predictions

Using complementary computing calculations and neutron scattering techniques, researchers from the Department of Energy’s Oak Ridge and Lawrence Berkeley national laboratories and the University of California, Berkeley, discovered the existence of an elusive type of spin dynamics in a quantum mechanical system.

Coalition’s new leadership renews focus on advocating for academic scientific computation

The Coalition for Academic Scientific Computation, or CASC — a network of more than 90 research computing-focused organizations from academic institutions, national labs, and research centers around the U.S. — hopes to continue to bring this critical computing work to the forefront through its activities. Newly elected leadership and recent changes within CASC are helping the nonprofit organization excel in this mission.

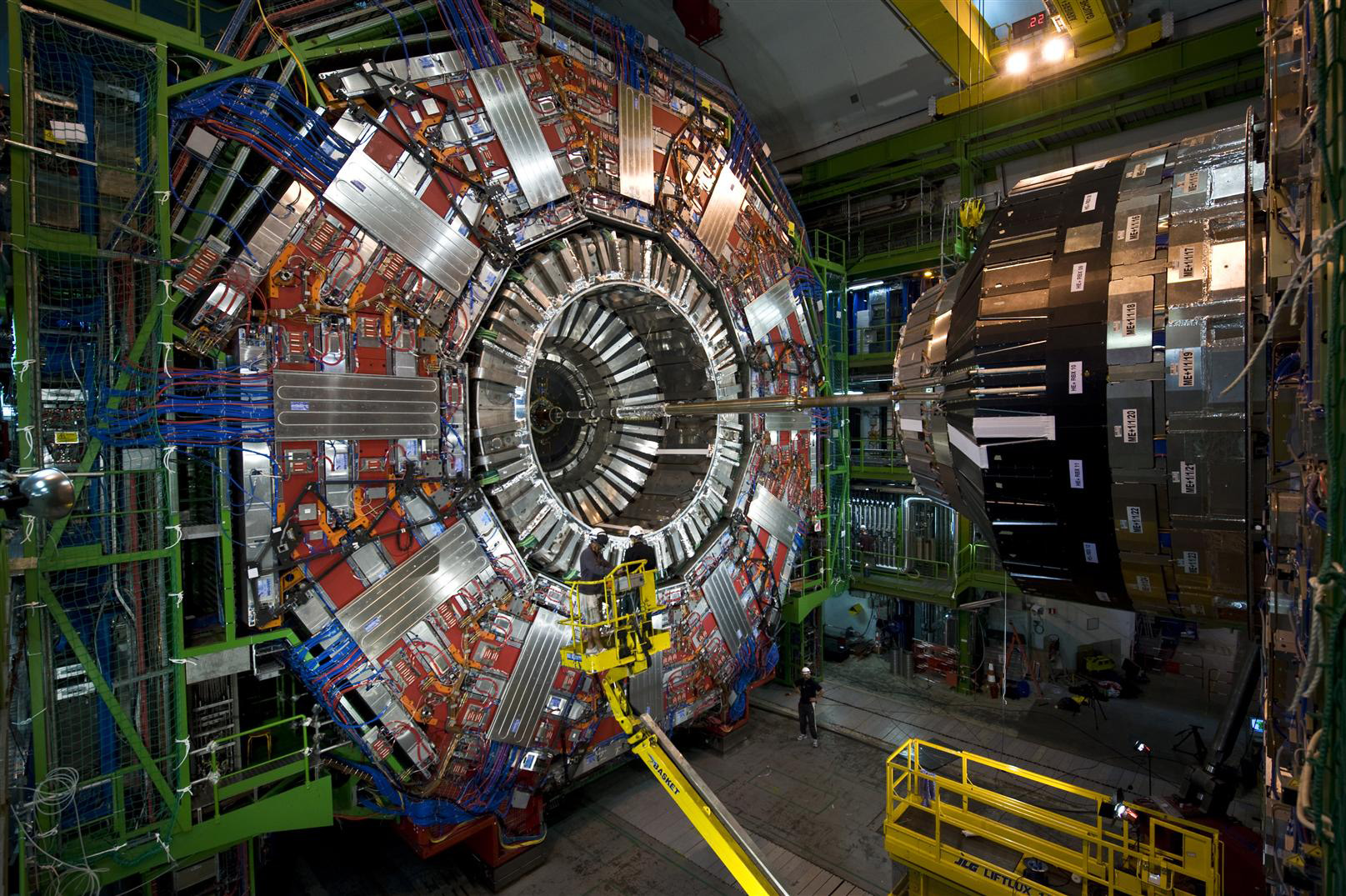

Coffea speeds up particle physics data analysis

The prodigious amount of data produced at the Large Hadron Collider presents a major challenge for data analysis. Coffea, a Python package developed by Fermilab researchers, speeds up computation and helps scientists work more efficiently. Around a dozen international LHC research groups now use Coffea, which draws on big data techniques used outside physics.

US Air Force, ORNL launch next-generation global weather forecasting system

The U.S. Air Force and the Department of Energy’s Oak Ridge National Laboratory launched a new high-performance weather forecasting computer system that will provide a platform for some of the most advanced weather modeling in the world.

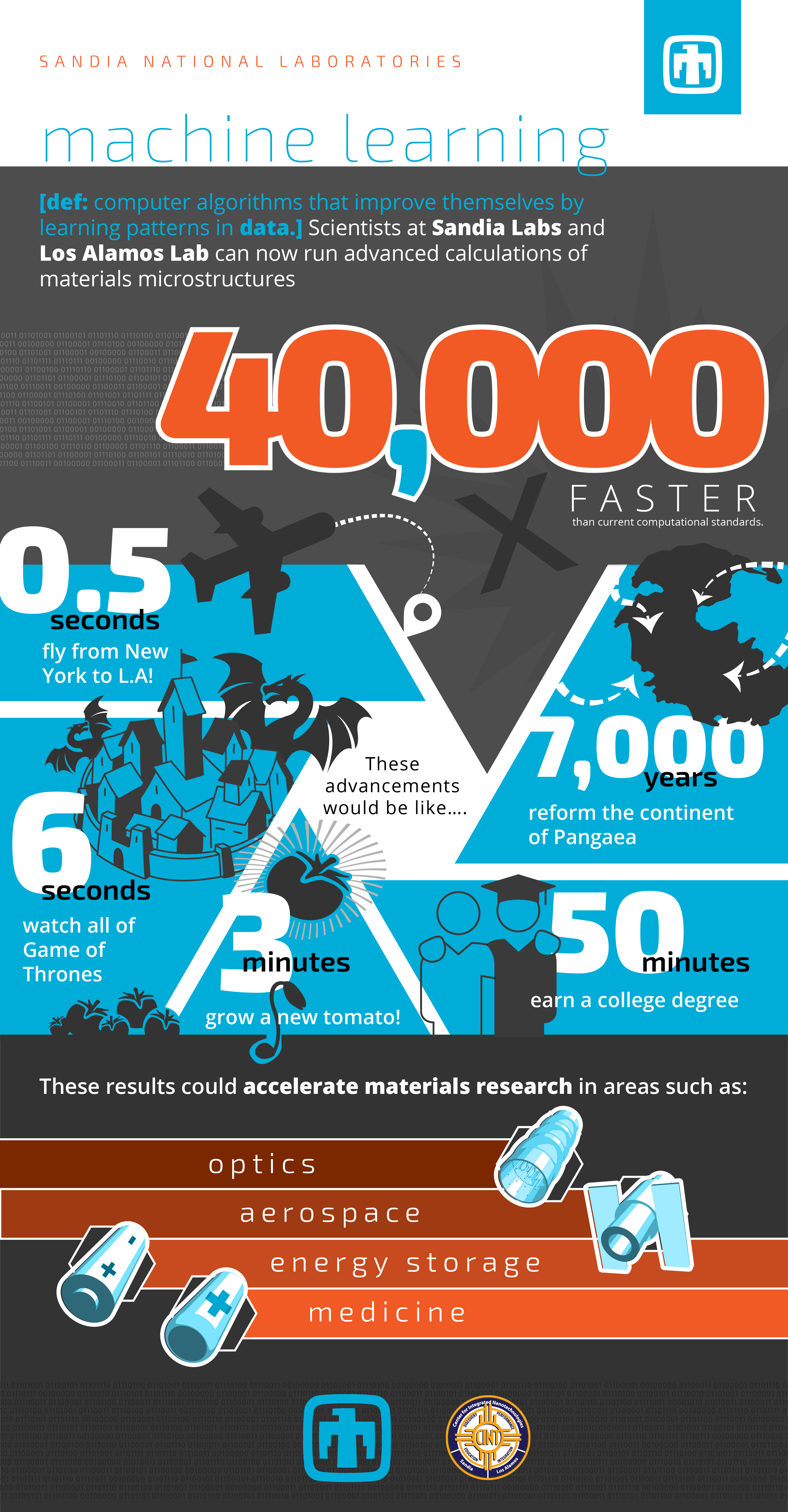

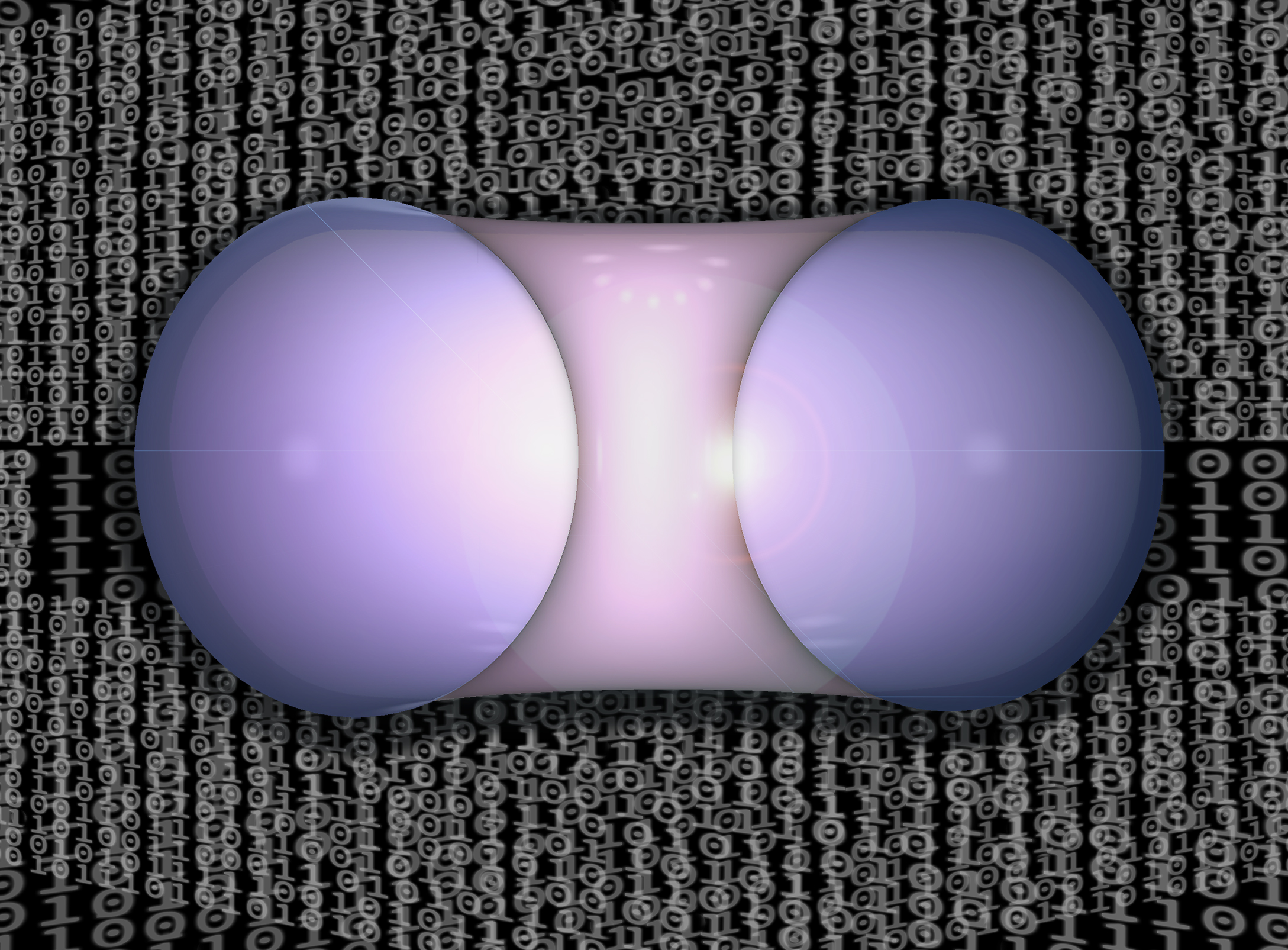

Advanced materials in a snap

A research team at Sandia National Laboratories has successfully used machine learning — computer algorithms that improve themselves by learning patterns in data — to complete cumbersome materials science calculations more than 40,000 times faster than normal.

Nikhil Tiwale: Practicing the Art of Nanofabrication

Applying his passions for science and art, Nikhil Tiwale—a postdoc at Brookhaven Lab’s Center for Functional Nanomaterials—is fabricating new microelectronics components.

Four Rutgers Professors Named AAAS Fellows

Four Rutgers professors have been named fellows of the American Association for the Advancement of Science (AAAS), an honor given to AAAS members by their peers. They join 485 other new AAAS fellows as a result of their scientifically or socially distinguished efforts to advance science or its applications. A virtual induction ceremony is scheduled for Feb. 13, 2021.

PNNL Researchers Speed Power Grid Simulations Using AI (Web Feature, November 9, 2020)

PNNL’s new Smart Power Grid Simulator, or Smart-PGsim, combines high-performance computing and artificial intelligence to optimize power grid simulations without sacrificing accuracy.

Globus Moves 1 Exabyte

Globus, a leading research data management service, reached a huge milestone by breaking the exabyte barrier. While it took over 2,000 days for the service to transfer the first 200 petabytes (PB) of data, the last 200PB were moved in just 247 days. This rapidly accelerating growth is reflected by the more than 150,000 registered users who have now transferred over 120 billion files using Globus.

Fermilab scientists selected as APS fellows

Three Fermilab scientists have been selected 2020 fellows of the American Physical Society, a distinction awarded each year to no more than one-half of 1 percent of current APS members by their peers.

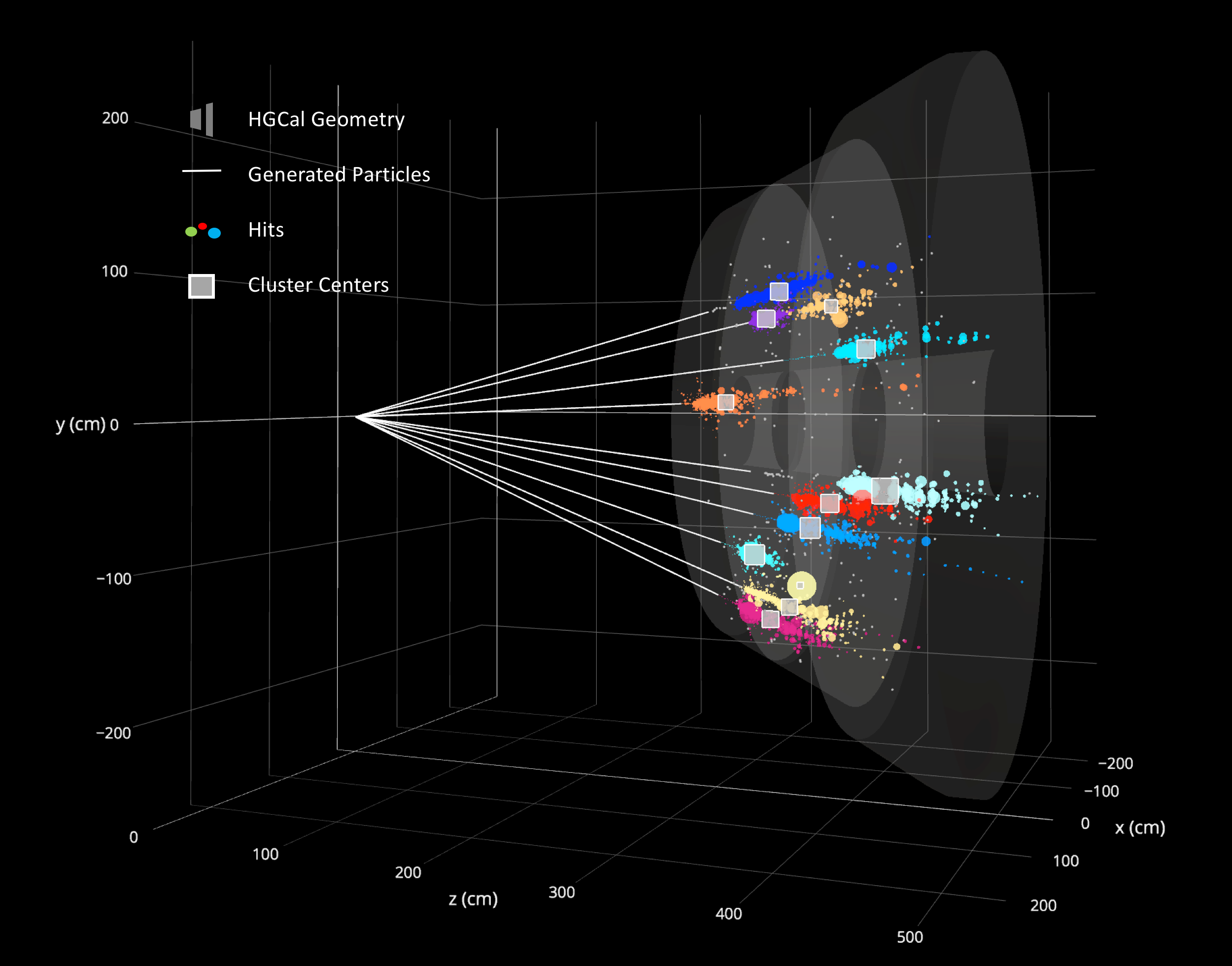

The next big thing: the use of graph neural networks to discover particles

Fermilab scientists have implemented a cloud-based machine learning framework to handle data from the CMS experiment at the Large Hadron Collider. Now they can begin to use graph neural networks to boost their pattern recognition abilities in the search for new particles.

Scientists develop forecasting technique that could help advance quest for fusion energy

An international group of researchers has developed a technique that forecasts how tokamaks might respond to unwanted magnetic errors. These forecasts could help engineers design fusion facilities that create a virtually inexhaustible supply of safe and clean fusion energy to generate electricity.

Design facility pushes innovation boundaries

A new design facility, located at the University of Adelaide, will offer organisations and individuals a space in which to push the boundaries of technical, industrial and business innovation.

White House Office of Science and Technology Policy, National Science Foundation and Department of Energy Announce Over $1 Billion in Awards for Artificial Intelligence and Quantum Information Science Research Institutes

Today, the White House Office of Science and Technology Policy, the National Science Foundation (NSF), and the U.S. Department of Energy (DOE) announced over $1 billion in awards for the establishment of 12 new artificial intelligence (AI) and quantum information science (QIS) research institutes nationwide.

Revised code could help improve efficiency of fusion experiments

Researchers led by PPPL have upgraded a key computer code for calculating forces acting on magnetically confined plasma in fusion energy experiments. The upgrade will help scientists further improve the design of breakfast-cruller-shaped facilities known as stellarators.

Calculating the Benefits of Exascale and Quantum Computers

The Department of Energy is supporting the development of both conventional exascale supercomputers and quantum computers. Each provides benefits that could transform scientific research.

Photon-Based Processing Units Enable More Complex Machine Learning

Machine learning performed by neural networks is a popular approach to developing artificial intelligence, as researchers aim to replicate brain functionalities for a variety of applications. A paper in the journal Applied Physics Reviews proposes a new approach to perform computations required by a neural network, using light instead of electricity. In this approach, a photonic tensor core performs multiplications of matrices in parallel, improving speed and efficiency of current deep learning paradigms.

Preparing for exascale: LLNL breaks ground on computing facility upgrades

To meet the needs of tomorrow’s supercomputers, the National Nuclear Security Administration’s (NNSA’s) Lawrence Livermore National Laboratory (LLNL) has broken ground on its Exascale Computing Facility Modernization (ECFM) project, which will substantially upgrade the mechanical and electrical capabilities of the Livermore Computing Center.

Meet the Intern Using Quantum Computing to Study the Early Universe

During an internship at Brookhaven National Laboratory, Juliette Stecenko is using modern supercomputers and quantum computing platforms to perform astronomy simulations that may help us better understand where we came from.

DUNE prepares for data onslaught

The Deep Underground Neutrino Experiment will collect massive amounts of data from star-born and terrestrial neutrinos. A worldwide network of computers will provide the infrastructure to help analyze it. Using artificial intelligence and machine learning, scientists write software to mine the data.

Fighting COVID with Computing

Fermilab, Brookhaven, and Open Science Grid dedicate computational power to COVID-19 research.

Fighting COVID with computing: Fermilab, Brookhaven, Open Science Grid dedicate computational power to COVID-19 research

Scientists and engineers at Fermilab and Brookhaven are uniting with other organizations in the Open Science Grid to help fight COVID-19 by dedicating considerable computational power to researchers studying how they can help combat the virus-borne disease.