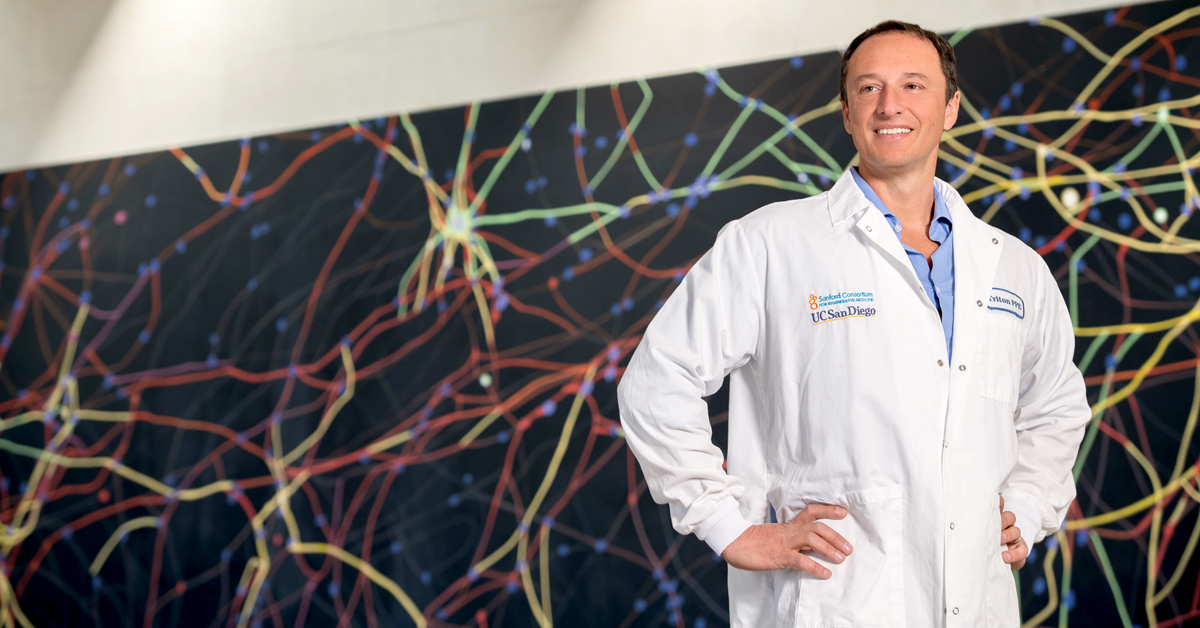

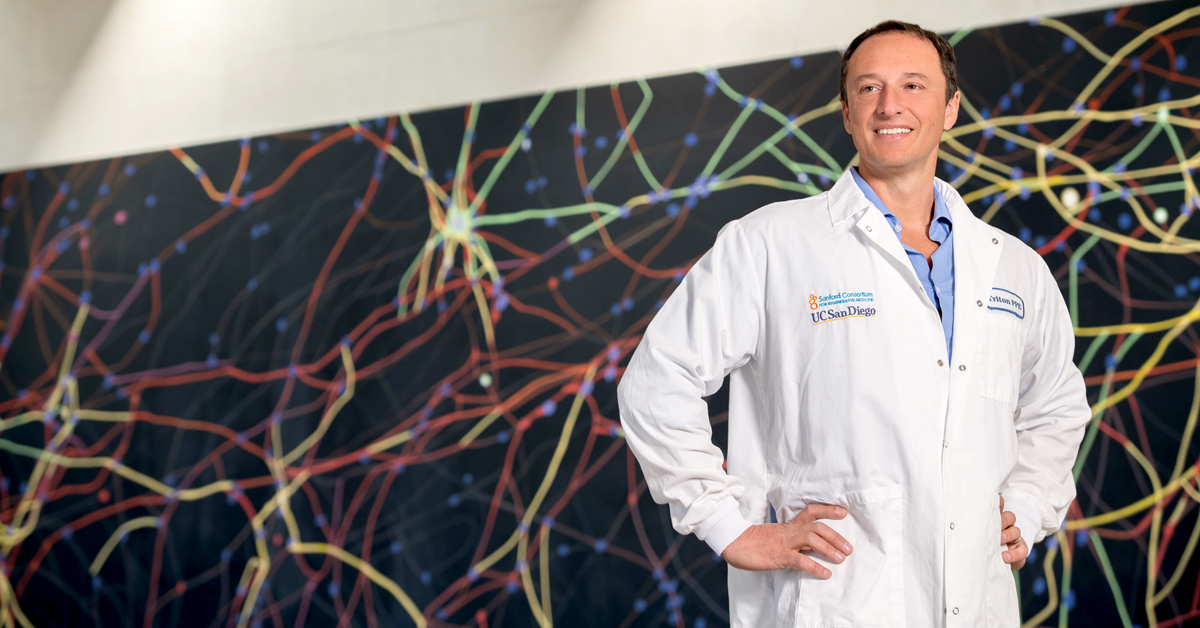

Researchers have developed, and shared, a process for creating brain cortical organoids: essentially miniature artificial brains with functioning neural networks.

A new study co-led by Georgia Institute of Technology’s Anna (Anya) Ivanova uncovers the relationship between language and thought in artificial intelligence models like ChatGPT, leveraging cognitive neuroscience research on the human brain. The results are a roadmap to developing new AIs — and to better understanding how we think and communicate.

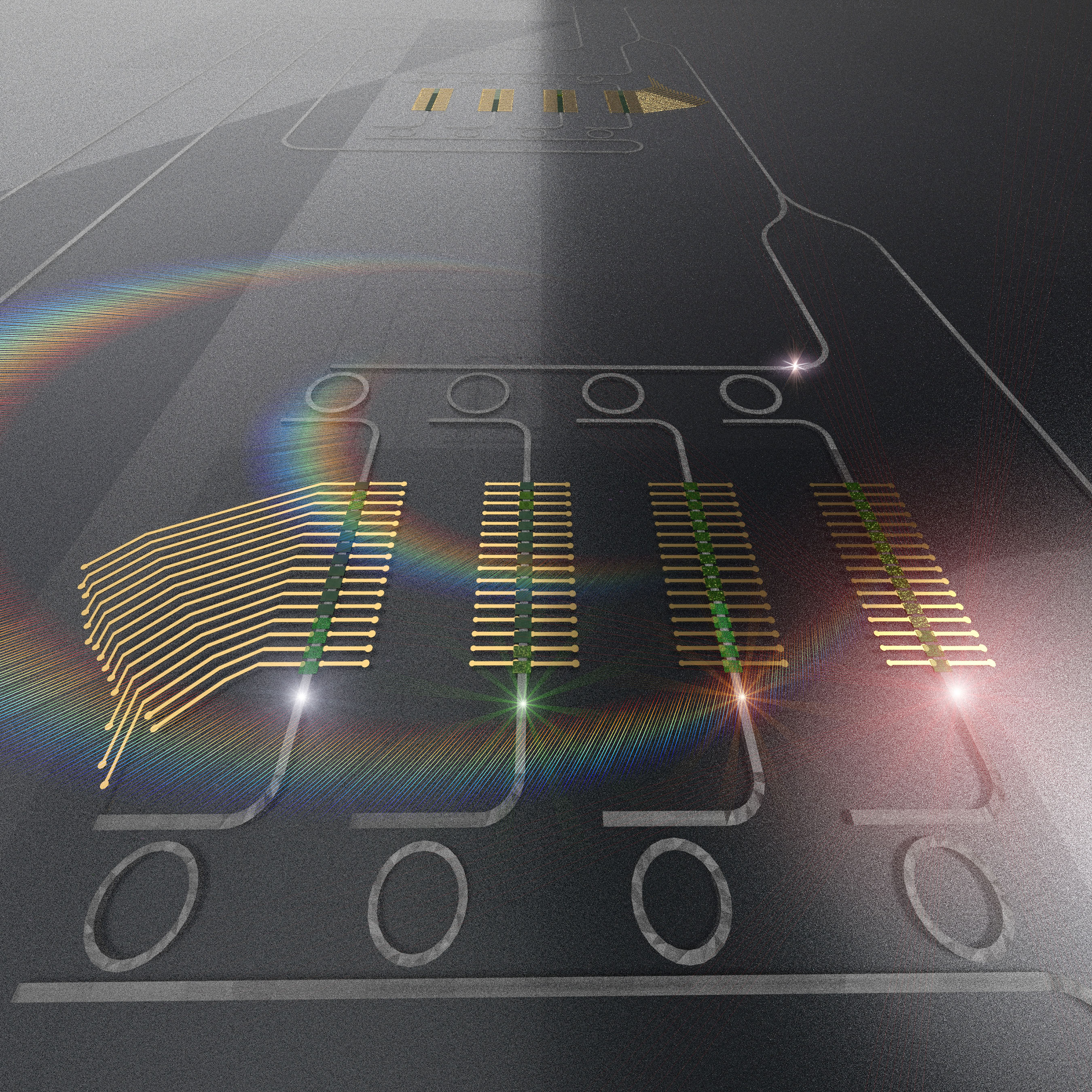

Polarimetry plays an indispensable role in modern optics, where demands for compactness and resolution keep rising. Toward this goal, scientists in China proposed a neural-network-assisted polarimetry method based on a tri-channel chiral metasurface.

Australian researchers have designed an algorithm that can intercept a man-in-the-middle (MitM) cyberattack on an unmanned military robot and shut it down in seconds.

A team of New York University computer scientists has created a neural network that can explain how it reaches its predictions. The work reveals what accounts for the functionality of neural networks—the engines that drive artificial intelligence and machine learning—thereby illuminating a process that has largely been concealed from users.

A team at Los Alamos National Laboratory has developed a novel approach for comparing neural networks that looks within the “black box” of artificial intelligence to help researchers understand neural network behavior. Neural networks recognize patterns in datasets; they are used everywhere in society, in applications such as virtual assistants, facial recognition systems and self-driving cars.

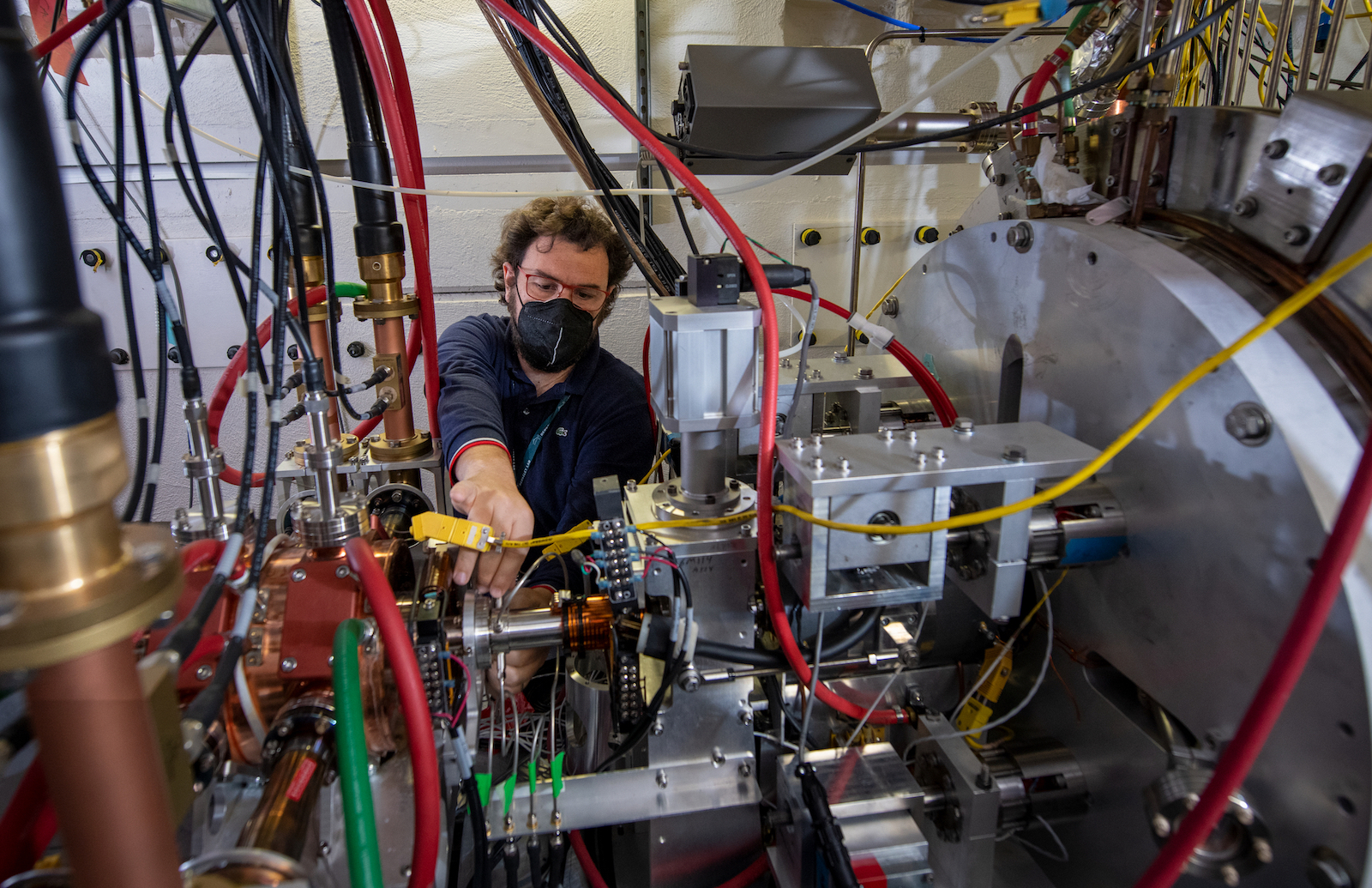

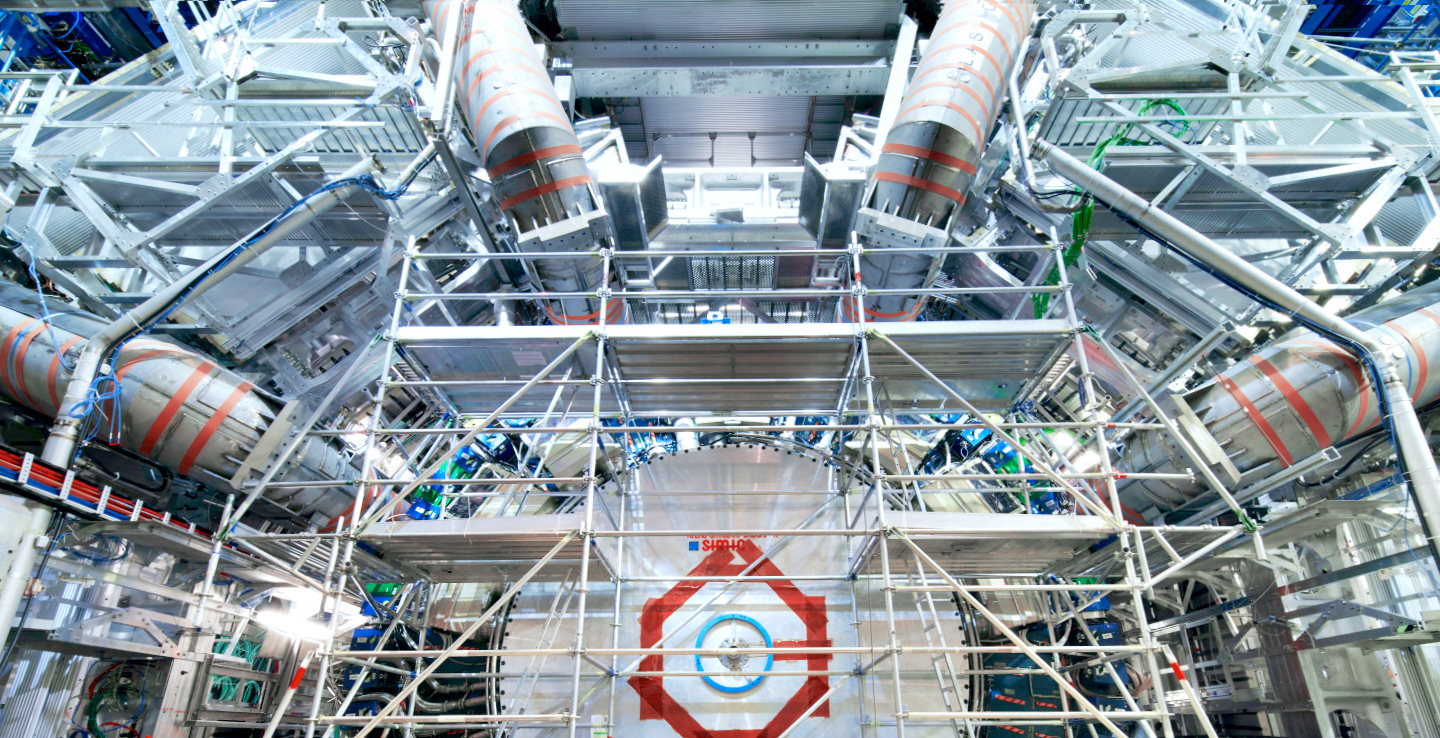

Scientists have developed a new machine-learning platform that makes the algorithms that control particle beams and lasers smarter than ever before. Their work could help lead to the development of new and improved particle accelerators that will help scientists unlock the secrets of the subatomic world.

University of Washington researchers created ClearBuds, earbuds that enhance the speaker’s voice and reduce background noise.

Using biological experiments, robot models, and a geometric theory of locomotion, researchers at the Georgia Institute of Technology investigated how and why intermediate lizard species, with their elongated bodies and short limbs, might use their bodies to move. They uncovered the existence of a previously unknown spectrum of body movements in lizards, revealing a continuum of locomotion dynamics between lizardlike and snakelike movements.

Scientists at the Icahn School of Medicine at Mount Sinai described the creation of a new, automated, artificial intelligence-based algorithm that can learn to read patient data from electronic health records. In a side-by-side comparison, they showed that their method, called Phe2vec (FEE-to-vek), identified patients with certain diseases as accurately as the traditional, “gold-standard” method, which requires much more manual labor to develop and perform.

A team of NIH microscopists and computer scientists used a type of artificial intelligence called a neural network to obtain clearer pictures of cells at work even with extremely low, cell-friendly light levels.

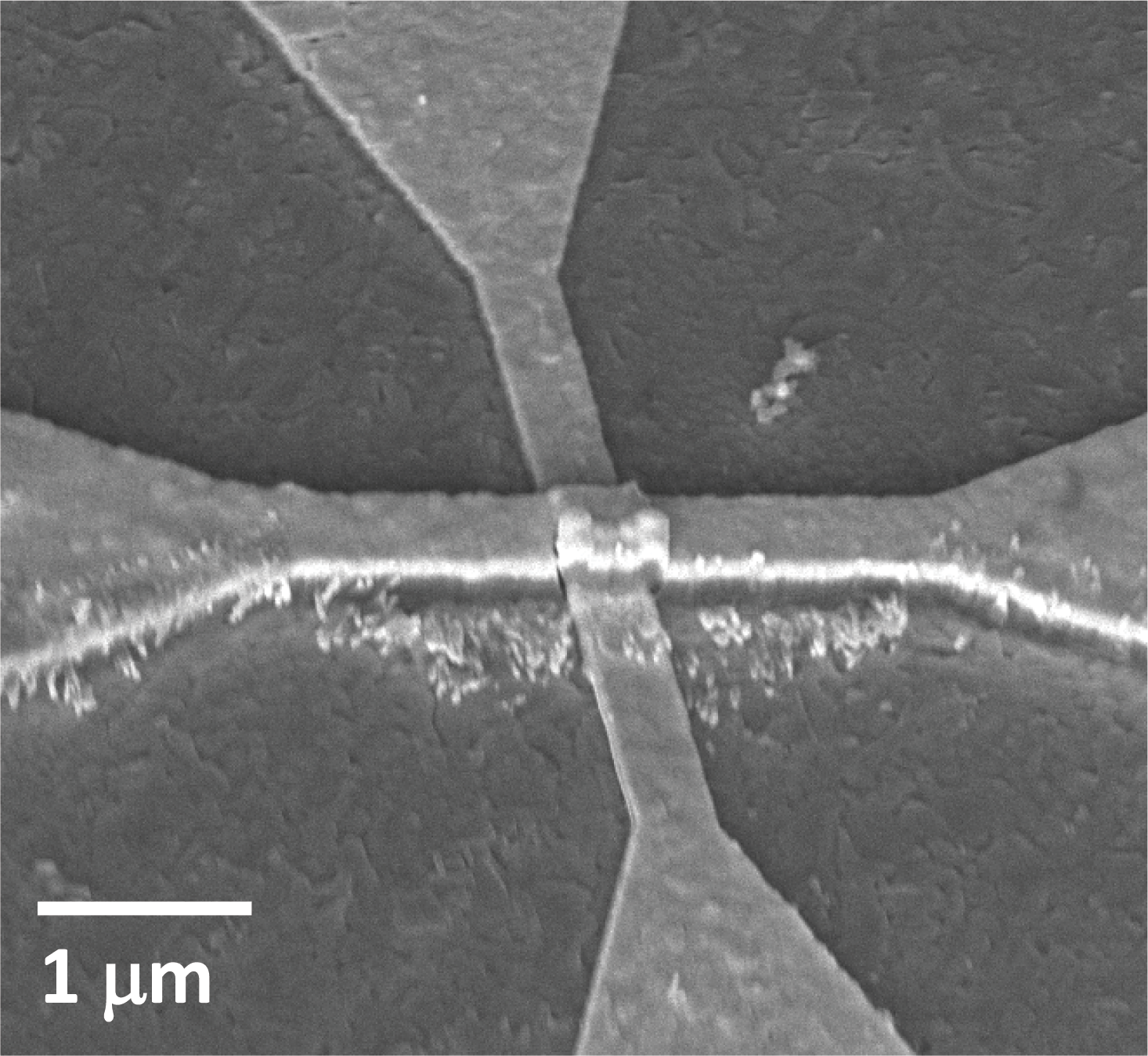

Neural network training could one day require less computing power and hardware, thanks to a new nanodevice that can run neural network computations using 100 to 1000 times less energy and area than existing CMOS-based hardware.

Berkeley Lab researchers participated in a study that used machine learning to scan for new particles in three years of particle-collision data from CERN’s ATLAS detector.

A University of Washington-led team has come up with a system that could help speed up AI performance and find ways to reduce its energy consumption: an optical computing core prototype that uses phase-change material.

Researchers at the George Washington University, together with researchers at the University of California, Los Angeles, and the deep-tech venture startup Optelligence LLC, have developed an optical convolutional neural network accelerator capable of processing large amounts of information, on the…

PNNL’s new Smart Power Grid Simulator, or Smart-PGsim, combines high-performance computing and artificial intelligence to optimize power grid simulations without sacrificing accuracy.

PNNL researchers and university collaborators have developed a system to ferret out questionable use of high-performance computing (HPC) systems.

Machine learning performed by neural networks is a popular approach to developing artificial intelligence, as researchers aim to replicate brain functionalities for a variety of applications. A paper in the journal Applied Physics Reviews proposes a new approach to perform computations required by a neural network, using light instead of electricity. In this approach, a photonic tensor core performs multiplications of matrices in parallel, improving speed and efficiency of current deep learning paradigms.

When it fires, a neuron consumes significantly more energy than an equivalent computer operation. And yet, a network of coupled neurons can continuously learn, sense and perform complex tasks at energy levels that are currently unattainable for even state-of-the-art processors. What does a neuron do to save energy that a contemporary computer processing unit doesn’t? Computer modelling by researchers at Washington University in St.

Ever wonder why your smartphone can do facial recognition, but your smartwatch can’t? UD’s Chengmo Yang is researching ways to support neural networks in low-power embedded systems by using emerging memory devices that can retrieve information even when powered off, while also minimizing errors.