Since 2019, a team of NASA scientists and their partners have been using NASA’s FUN3D software on supercomputers located at the Department of Energy’s Oak Ridge Leadership Computing Facility, or OLCF, to conduct computational fluid dynamics, or CFD, simulations of a human-scale Mars lander. The team’s ongoing research project is a first step in determining how to safely land a vehicle with humans onboard onto the surface of Mars.

Tag: Supercomputing

Deep learning speeds up galactic calculations

A new way to simulate supernovae may help shed light on our cosmic origins

Novel Framework Improves the Efficiency of Complex Supercomputer Physics Calculations

Some types of quantum chromodynamics (QCD) calculations are so complex they strain even supercomputers. To speed these calculations, researchers developed MemHC, an optimized memory framework.

Drug discovery on an unprecedented scale

Boosting virtual screening with machine learning allowed for a 10-fold time reduction in the processing of 1.56 billion drug-like molecules. Researchers from the University of Eastern Finland teamed up with industry and supercomputers to carry out one of the world’s largest virtual drug screens.

LLNL scientists among finalists for new Gordon Bell climate modeling award

A team from Lawrence Livermore and seven other Department of Energy (DOE) national laboratories is a finalist for the new Association for Computing Machinery (ACM) Gordon Bell Prize for Climate Modeling for running an unprecedented high-resolution global atmosphere model on the world’s first exascale supercomputer.

Modeling climate extremes

Researchers from Oak Ridge National Laboratory and Northeastern University modeled how extreme conditions in a changing climate affect the land’s ability to absorb atmospheric carbon — a key process for mitigating human-caused emissions.

James Barr von Oehsen Named Director of the Pittsburgh Supercomputing Center

James Barr von Oehsen has been selected as the director of the Pittsburgh Supercomputing Center (PSC), a joint research center of Carnegie Mellon University and the University of Pittsburgh. Von Oehsen is a leader in the fields of cyberinfrastructure, research computing, advanced networking, data science and information technology.

Celeritas code will accelerate high energy physics simulations with supercomputers

Scientists at the Department of Energy’s Oak Ridge National Laboratory are leading a new project to ensure that the fastest supercomputers can keep up with big data from high energy physics research.

Nuclear Physics Gets a Boost for High-Performance Computing

Efforts to harness the power of supercomputers to better understand the hidden worlds inside the nucleus of the atom recently received a big boost. A project led by the U.S. Department of Energy’s (DOE’s) Thomas Jefferson National Accelerator Facility is one of three to split $35 million in grants from the DOE via a partnership program of DOE’s Scientific Discovery through Advanced Computing (SciDAC). The $13 million project includes key scientists based at six DOE national labs and two universities, including Jefferson Lab, Argonne National Lab, Brookhaven National Lab, Oak Ridge National Lab, Lawrence Berkeley National Lab, Los Alamos National Lab, Massachusetts Institute of Technology and William & Mary.

Reducing Redundancy to Accelerate Complicated Computations

Computers help physicists solve complicated calculations. But some of these calculations are so complex that a regular computer is not enough. In fact, some advanced calculations tax even the largest supercomputers. Now, scientists at Jefferson Lab and William & Mary have developed MemHC, a new tool that uses memory optimization methods to allow GPU-based computers to calculate the structures of neutrons and protons ten times faster.

PSC Receives Honors for AI-Driven, Automated Discovery of MRI Agents and Control of Fluid-Flow Heat and Stress

Science performed with the Pittsburgh Supercomputing Center’s advanced research computers has been recognized with two HPCwire Editors’ Choice Awards, presented at the SC22 conference in Dallas, Texas.

Machine learning helps scientists peer (a second) into the future

The past may be a fixed and immutable point, but with the help of machine learning, the future can at times be more easily divined.

Unveiling the Existence of the Elusive Tetraneutron

Nuclear physicists have experimentally confirmed the existence of the tetraneutron, a meta-stable nuclear system that can decay into four free neutrons. Researchers have predicted the tetraneutron’s existence since 2016. The new results, which agree with predictions from supercomputer simulations, will help scientists understand atomic nuclei, neutron stars, and other neutron-rich systems.

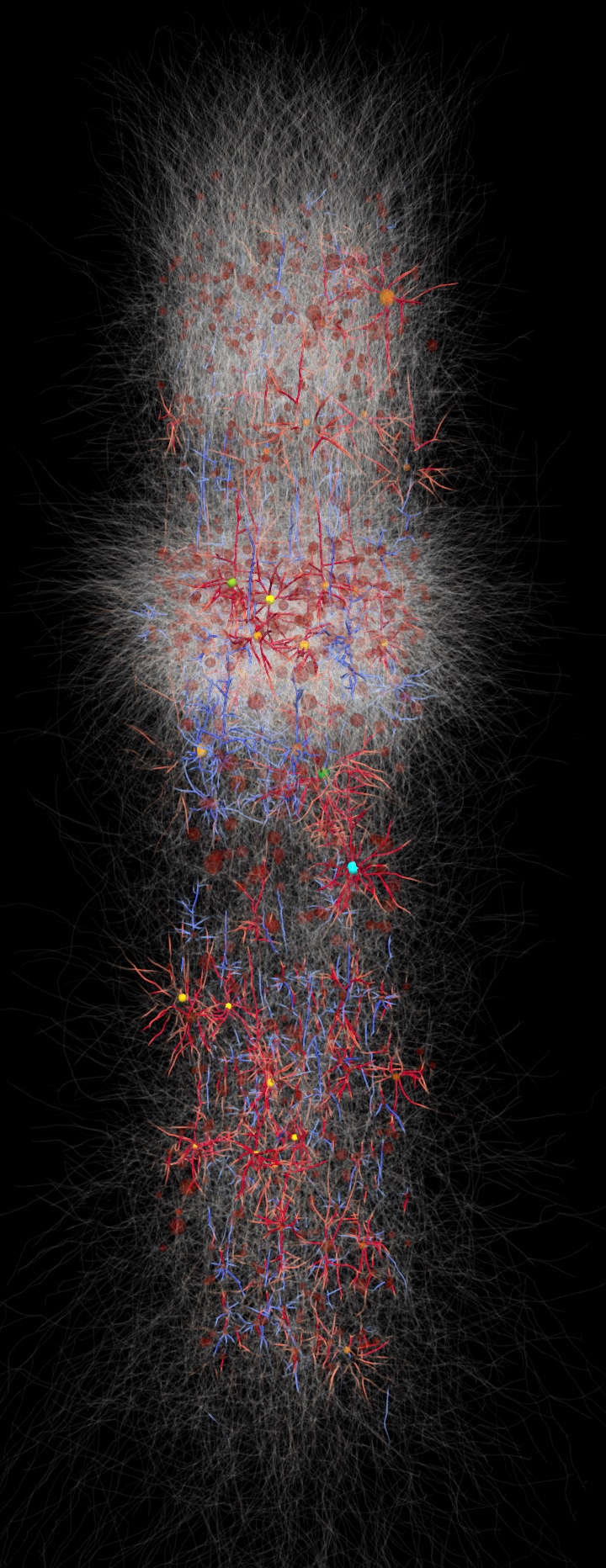

Neuroscience Simulations at NERSC Shed Light on Origins of Human Brain Recordings

Using simulations run at the National Energy Research Scientific Computing Center, a team of researchers at Lawrence Berkeley National Laboratory has found the origin of cortical surface electrical signals in the brain and discovered why the signals originate where they do.

Researchers reveal the origin story for carbon-12, a building block for life

After running simulations on the world’s most powerful supercomputer, an international team of researchers has developed a theory for the nuclear structure and origin of carbon-12, the stuff of life. The theory favors the production of carbon-12 in the cosmos.

Supercomputing, neutrons crack code to uranium compound’s signature vibes

Oak Ridge National Laboratory researchers used the nation’s fastest supercomputer to map the molecular vibrations of an important but little-studied uranium compound produced during the nuclear fuel cycle for results that could lead to a cleaner, safer world.

PSC and Partners to Lead $7.5-Million Project to Allocate Access on NSF Supercomputers

The NSF has awarded $7.5 million over five years to the RAMPS project, a next-generation system for awarding computing time in the NSF’s network of supercomputers. RAMPS is led by the Pittsburgh Supercomputing Center and involves partner institutions in Colorado and Illinois.

U.S. Department of Energy to Showcase National Lab Expertise at SC21

The scientific computing and networking leadership of the U.S. Department of Energy’s (DOE’s) national laboratories will be on display at SC21, the International Conference for High-Performance Computing, Networking, Storage and Analysis. The conference takes place Nov. 14-19 in St. Louis via a combination of on-site and online resources.

Argonne and Oak Ridge National Laboratories award Codeplay software

Argonne National Laboratory (Argonne), in collaboration with Oak Ridge National Laboratory (ORNL), has awarded Codeplay a contract to implement the oneAPI DPC++ compiler, an implementation of the SYCL open standard, to support AMD GPU-based high-performance computing (HPC) supercomputers.

LLNL team looks at nuclear weapon effects for near-surface detonations

A Lawrence Livermore National Laboratory team has taken a closer look at how nuclear weapon blasts close to the Earth’s surface create complications in their effects and apparent yields. Attempts to correlate data from events with low heights of burst revealed a need to improve the theoretical treatment of strong blast waves rebounding from hard surfaces.

Physicists Crack the Code to Signature Superconductor Kink Using Supercomputing

A team performed simulations on the Summit supercomputer and found that electrons in cuprates interact with phonons much more strongly than was previously thought, leading to experimentally observed “kinks” in the relationship between an electron’s energy and the momentum it carries.

FAU Unveils Center for Connected Autonomy and Artificial Intelligence

To rapidly advance the field of artificial intelligence and autonomy, FAU’s College of Engineering and Computer Science recently unveiled its “Center for Connected Autonomy and Artificial Intelligence.”

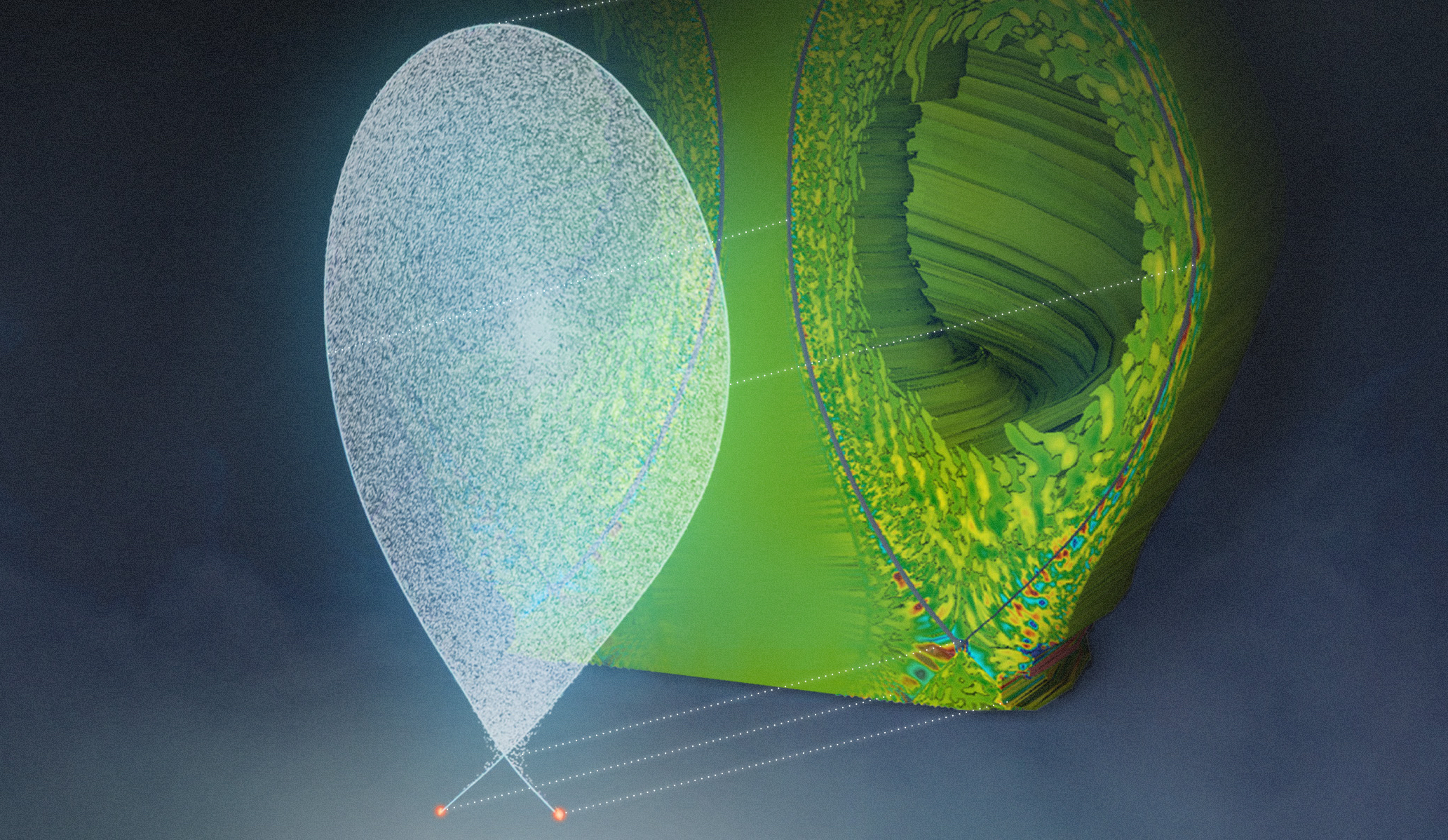

HPC Explorations of Supernova Explosions Help Physicists Reach New Milestones

Physicists have been studying the question of how supernova explosions occur for more than 60 years. Thanks to the increasing power of supercomputing resources such as those at the National Energy Research Scientific Computing Center at Lawrence Berkeley National Laboratory, they’re moving ever closer to an answer.

Scientists Use Supercomputers to Study Reliable Fusion Reactor Design, Operation

A team used two DOE supercomputers to complete simulations of the full-power ITER fusion device and found that the component that removes exhaust heat from ITER may be more likely to maintain its integrity than was predicted by the current trend of fusion devices.

Supercomputers Aid Scientists Studying the Smallest Particles in the Universe

Using the nation’s fastest supercomputer, Summit at Oak Ridge National Laboratory, a team of nuclear physicists developed a promising method for measuring quark interactions in hadrons and applied the method to simulations using quarks with close-to-physical masses.

Designing Materials from First Principles with Yuan Ping

The UC Santa Cruz professor uses computing resources at Brookhaven Lab’s Center for Functional Nanomaterials to run calculations for quantum information science, spintronics, and energy research.

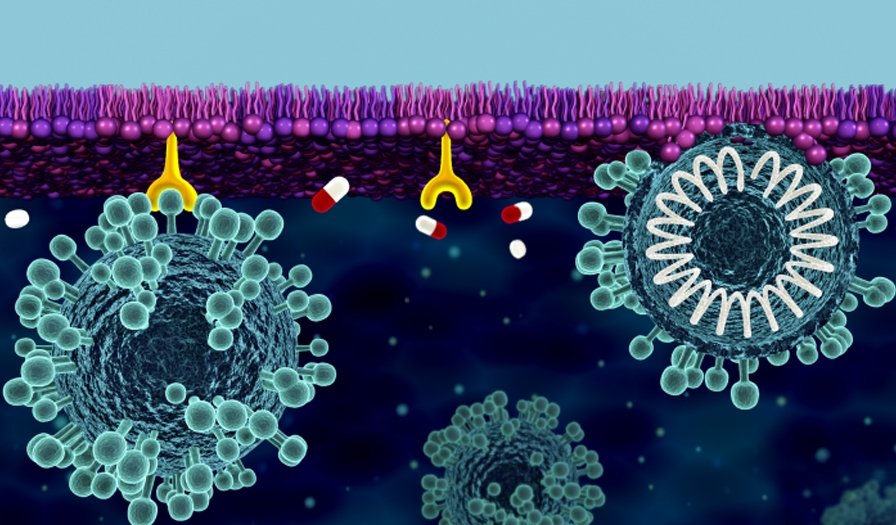

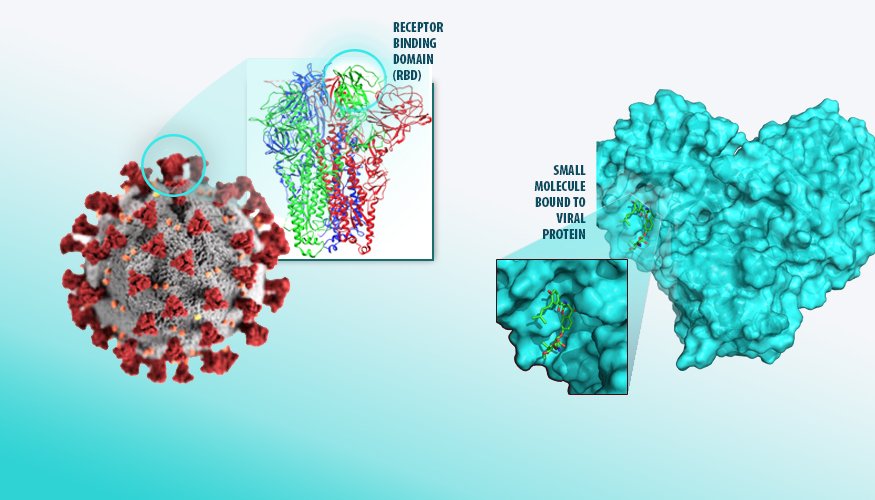

Mobilizing Science to Tackle COVID-19

Responding to COVID-19 has required a huge coordinated effort from the scientific community. The Department of Energy’s Office of Science has spearheaded several scientific efforts, including the National Virtual Biotechnology Laboratory.

Kalyan R. Perumalla: Then and Now / 2010 Early Career Award Winner

Kalyan R S Perumalla is a Distinguished Research and Development Staff Member at Oak Ridge National Laboratory, whose work on reversible computing for exascale computers also provides insights applicable to next-generation programming.

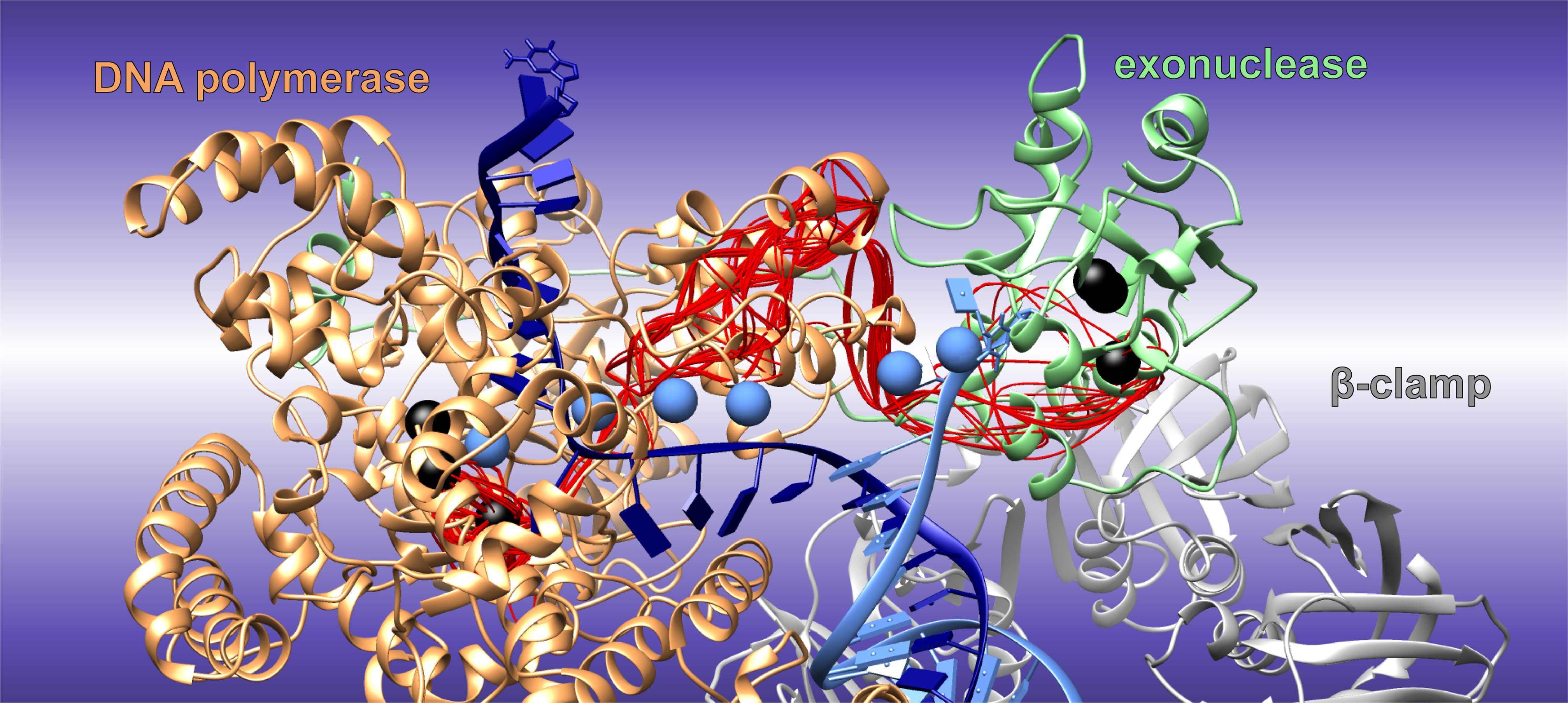

Simulations Reveal Nature’s Design for Error Correction During DNA Replication

A Georgia State University team has used the nation’s fastest supercomputer, Summit at the US Department of Energy’s Oak Ridge National Laboratory, to find the optimal transition path that one E. coli enzyme uses to switch between building and editing DNA to rapidly remove misincorporated pieces of DNA.

Machine learning model for COVID-19 drug discovery is a Gordon Bell finalist

A machine learning model developed by a team of Lawrence Livermore National Laboratory (LLNL) scientists to aid in COVID-19 drug discovery efforts is a finalist for the Gordon Bell Special Prize for High Performance Computing-Based COVID-19 Research.

Story Tips: Ice breaker data, bacterial breakdown, catching heat and finding order

ORNL story tips: Ice breaker data, bacterial breakdown, catching heat and finding order

Mammoth “big memory” computing cluster to aid in COVID-19 research

Lawrence Livermore National Laboratory and its partners AMD, Supermicro and Cornelis Networks have installed a new high performance computing (HPC) cluster with memory and data storage capabilities optimized for data-intensive COVID-19 research and pandemic response.

AI gets a boost via LLNL, SambaNova collaboration

Lawrence Livermore National Laboratory (LLNL) has installed a state-of-the-art artificial intelligence (AI) accelerator from SambaNova Systems, the National Nuclear Security Administration (NNSA) announced today, allowing researchers to more effectively combine AI and machine learning (ML) with complex scientific workloads.

Preparing for science in the exascale era

As the future home to the Aurora exascale system, Argonne National Laboratory has been ramping up efforts to ready the supercomputer and its future users for science in the exascale era.

CARES Act funds major upgrade to Corona supercomputer for COVID-19 work

With funding from the Coronavirus Aid, Relief and Economic Security (CARES) Act, Lawrence Livermore National Laboratory, chipmaker AMD and information technology company Supermicro have upgraded the supercomputing cluster Corona, providing additional resources to scientists for COVID-19 drug discovery and vaccine research.

LLNL to provide supercomputing resources to universities selected by NNSA Advanced Simulation and Computing program

Lawrence Livermore National Laboratory (LLNL) will provide significant computing resources to students and faculty from nine universities that were newly selected for participation in the National Nuclear Security Administration (NNSA)’s Predictive Science Academic Alliance Program (PSAAP).

New Calculation Refines Comparison of Matter with Antimatter

An international collaboration of theoretical physicists has published a new calculation relevant to the search for an explanation of the predominance of matter over antimatter in our universe. The new calculation gives a more accurate prediction for the likelihood with which kaons decay into a pair of electrically charged pions vs. a pair of neutral pions.

LLNL pairs world’s largest computer chip from Cerebras with “Lassen” supercomputer to accelerate AI research

Lawrence Livermore National Laboratory (LLNL) and artificial intelligence computer company Cerebras Systems have integrated the world’s largest computer chip into the National Nuclear Security Administration’s (NNSA’s) Lassen system, upgrading the top-tier supercomputer with cutting-edge AI technology.

NSF Grant Backs funcX — A Smart, Automated Delegator for Computational Research

Computational scientific research is no longer one-size-fits-all. The massive datasets created by today’s cutting-edge instruments and experiments — telescopes, particle accelerators, sensor networks and molecular simulations — aren’t best processed and analyzed by a single type of machine.

Computational gene study suggests new pathway for COVID-19 inflammatory response

A team led by Dan Jacobson of the Department of Energy’s Oak Ridge National Laboratory used the Summit supercomputer at ORNL to analyze genes from cells in the lung fluid of nine COVID-19 patients compared with 40 control patients.

Love-hate relationship of solvent and water leads to better biomass breakup

Scientists at the Department of Energy’s Oak Ridge National Laboratory used neutron scattering and supercomputing to better understand how an organic solvent and water work together to break down plant biomass, creating a pathway to significantly improve the production of renewable biofuels and bioproducts.

Summit Helps Predict Molecular Breakups

A team used the Summit supercomputer to simulate transition metal systems—such as copper bound to molecules of nitrogen, dihydrogen, or water—and correctly predicted the amount of energy required to break apart dozens of molecular systems, paving the way for a greater understanding of these materials.

Preparing for exascale: LLNL breaks ground on computing facility upgrades

To meet the needs of tomorrow’s supercomputers, the National Nuclear Security Administration’s (NNSA’s) Lawrence Livermore National Laboratory (LLNL) has broken ground on its Exascale Computing Facility Modernization (ECFM) project, which will substantially upgrade the mechanical and electrical capabilities of the Livermore Computing Center.

Knocking Out Drug Side Effects with Supercomputing

A team at Stanford University used the OLCF’s Summit supercomputer to compare simulations of a G protein-coupled receptor with different molecules attached to gain an understanding of how to minimize or eliminate side effects in drugs that target these receptors.

Supercomputing Aids Scientists Seeking Therapies for Deadly Bacterial Disease

A team of scientists led by Abhishek Singharoy at Arizona State University used the Summit supercomputer at the Oak Ridge Leadership Computing Facility to simulate the structure of a possible drug target for the bacterium that causes rabbit fever.

Story Tips: Mining for COVID, rules to grow by and the 3D connection

ORNL story tips: Mining for COVID, rules to grow by and the 3D connection

Simulations forecast nationwide increase in human exposure to extreme climate events

Using ORNL’s now-decommissioned Titan supercomputer, a team of researchers estimated the combined consequences of many different extreme climate events at the county level, a unique approach that provided unprecedented regional and national climate projections that identified the areas most likely to face climate-related challenges.

Four Years of Calculations Lead to New Insights into Muon Anomaly

Two decades ago, an experiment at Brookhaven National Laboratory pinpointed a mysterious mismatch between established particle physics theory and actual lab measurements. A multi-institutional research team (including Brookhaven, Columbia University, RIKEN, and the universities of Connecticut, Nagoya and Regensburg) has used Argonne National Laboratory’s Mira supercomputer to help narrow down the possible explanations for the discrepancy, delivering a newly precise theoretical calculation that refines one piece of this very complex puzzle.

Major upgrades of particle detectors and electronics prepare CERN experiment to stream a data tsunami

For an experiment that will generate big data at unprecedented rates, physicists led the design, development, mass production and delivery of an upgrade of novel particle detectors and state-of-the-art electronics.

Advanced software framework expedites quantum-classical programming

An ORNL team developed the XACC software framework to help researchers harness the potential power of quantum processing units, or QPUs. XACC offloads portions of quantum-classical computing workloads from the host CPU to an attached quantum accelerator, which calculates results and sends them back to the original system.