A new University of Washington study, published May 21 in Developmental Science, is the first to compare the amount of music and speech that children hear in infancy. Results showed that infants hear more spoken language than music, with the gap widening as the babies get older.

Tag: Speech

Machine Listening: Making Speech Recognition Systems More Inclusive

One group commonly misunderstood by voice technology is speakers of African American English, or AAE.

Twist on theatre sports could counteract a stutter

Mock ‘Ninja knife throwing’, ‘Gibberish’, or the fast and furious ‘Zap’ – they’re all favourite theatre games designed to break ice and boost confidence. But add speech therapy to theatre sports and you get a brand-new experience that’s hoping to deliver positive changes for people with a stutter.

Why do we articulate more when speaking to babies and puppies?

Babies and puppies have at least two things in common: aside from being newborns, they promote a positive emotional state in human mothers, leading them to articulate better when they speak.

Babies talk more around man-made objects than natural ones

A new study, led by the University of Portsmouth, suggests young children are more vocal when interacting with toys and household items, highlighting their importance for developing language skills.

Hackensack Meridian Health Invests in Canary Speech, Company with AI Software to Assess Anxiety, Wellness in Spoken Words

Hackensack Meridian Health and its Bear’s Den program invest in company to help detect potential health problems hinted at in speech patterns

Vocal Tract Size, Shape Dictate Speech Sounds

In JASA, researchers explore how anatomical variations in a speaker’s vocal tract affect speech production. Using MRI, the team recorded the shape of the vocal tract for 41 speakers as the subjects produced a series of representative speech sounds. They averaged these shapes to establish a sound-independent model of the vocal tract. Then they used statistical analysis to extract the main variations between speakers. A handful of factors explained nearly 90% of the differences between speakers.

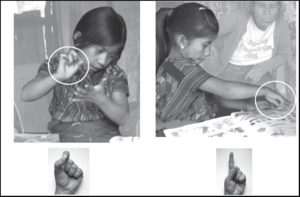

The Self-Taught Vocabulary of Homesigning Deaf Children Supports Universal Constraints on Language

Thousands of languages spoken throughout the world draw on many of the same fundamental linguistic abilities and reflect universal aspects of how humans categorize events. Some aspects of language may also be universal to people who create their own sign languages.

Bot gives nonnative speakers the floor in videoconferencing

Native speakers often dominate the discussion in multilingual online meetings, but adding an automated participant that periodically interrupts the conversation can help nonnative speakers get a word in edgewise, according to new research at Cornell.

GW Expert on President Biden’s Thursday Primetime Speech

President Biden will deliver an address to the American people from Philadelphia’s Independence Hall tomorrow night. The White House says the speech will focus on “the continued battle for the Soul of the Nation,” a topic that Biden has…

Link between recognizing our voice and feeling in control

New study on our connection to our voice contributes to better understanding of auditory hallucinations and could improve VR experiences.

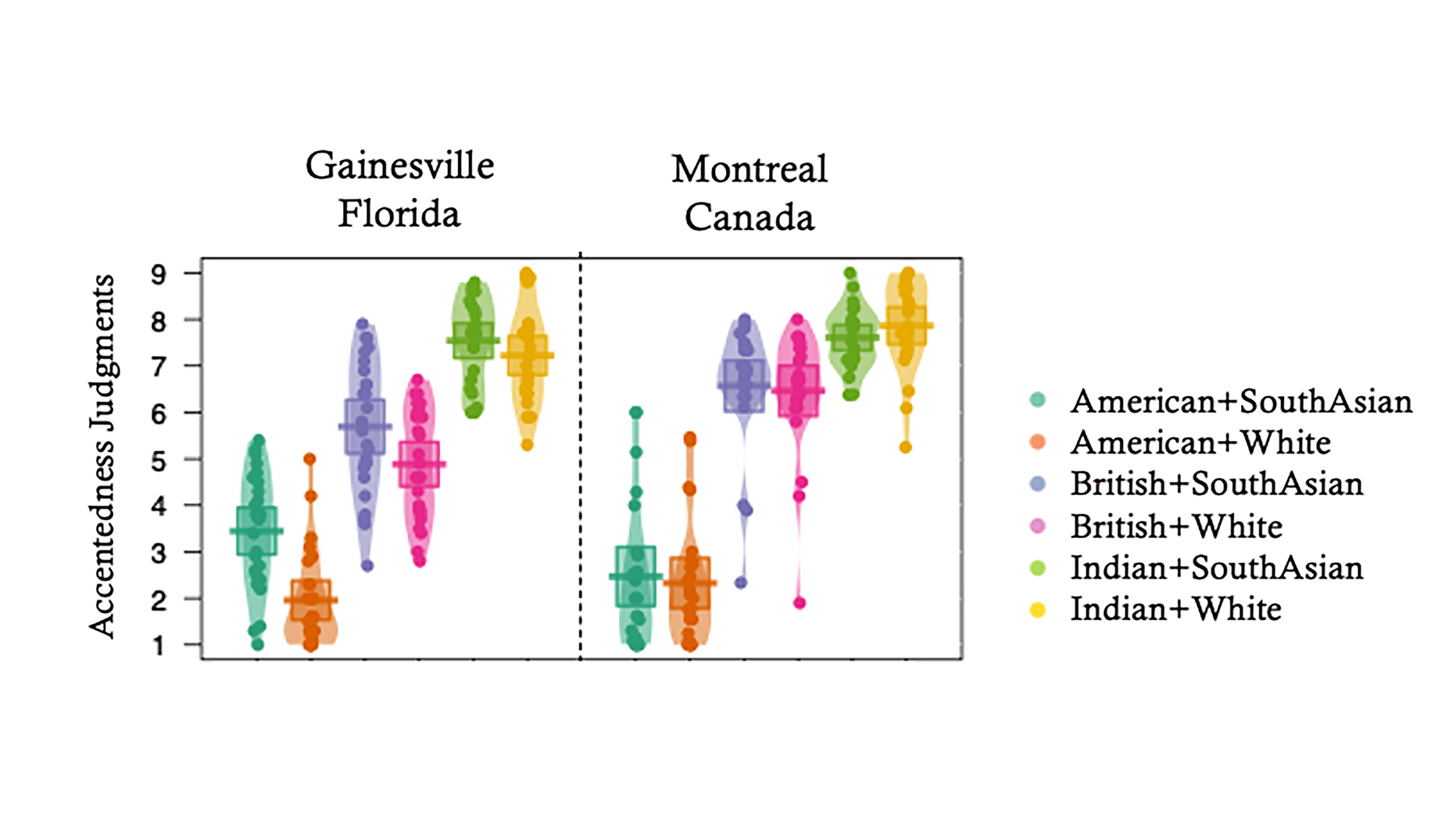

Diverse Social Networks Reduce Accent Judgments #ASA182

Everyone has an accent. But the intelligibility of speech doesn’t just depend on that accent; it also depends on the listener. Visual cues and the diversity of the listener’s social network can impact their ability to understand and transcribe sentences after listening to the spoken word.

Researchers have developed a Russian-language method for the preoperative mapping of language areas

Neurolinguists from HSE University, in collaboration with radiologists from the Pirogov National Medical and Surgical Centre, developed a Russian-language protocol for functional magnetic resonance imaging (fMRI) that makes it possible to map individual language areas before neurosurgical operations.

Happy stories synch brain activity more than sad stories

Successful storytelling can synchronize brain activity between the speaker and listener, but not all stories are created equal.

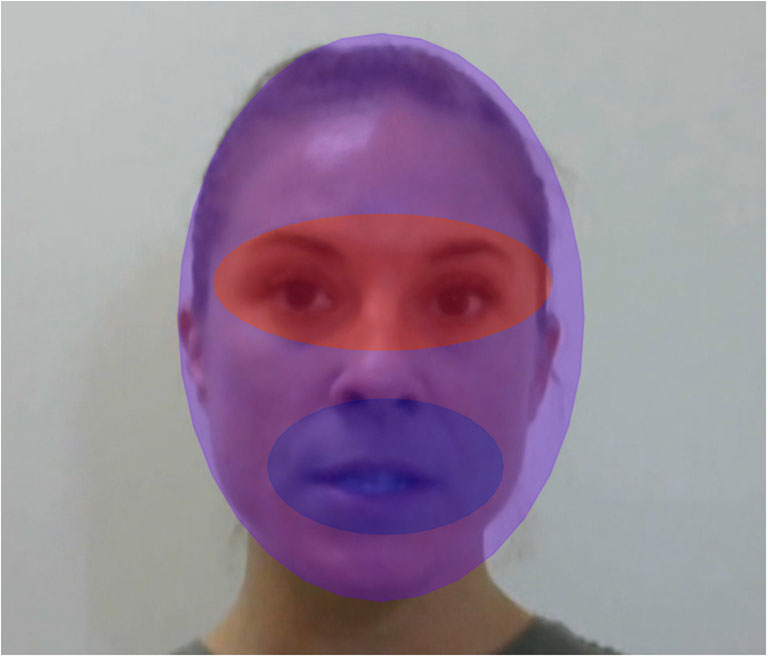

To Better Understand Speech, Focus on Who Is Talking

Researchers have found that matching the locations of faces with the speech sounds they are producing significantly improves our ability to understand them, especially in noisy areas where other talkers are present. In the Journal of the Acoustical Society of America, they outline a set of online experiments that mimicked aspects of distracting scenes to learn more about how we focus on one audio-visual talker and ignore others.

What a song reveals about vocal imitation deficits for autistic individuals

A new paper comparing the ability to match pitch and duration in speech and song is providing valuable insight into vocal imitation deficits for children and adults with autism spectrum disorder.

Does Visual Feedback of Our Tongues Help in Speech Motor Learning?

When we speak, we use our auditory and somatosensory systems to monitor the results of the movements of our tongue or lips. Since we cannot typically see our own faces and tongues while we speak, however, the potential role of visual feedback has remained less clear. In the Journal of the Acoustical Society of America, researchers explore how readily speakers will integrate visual information about their tongue movements during a speech motor learning task.

‘Talking Drum’ Shown to Accurately Mimic Speech Patterns of West African Language

Musicians such as Jimi Hendrix and Eric Clapton are considered virtuosos, guitarists who could make their instruments sing.

Fit kids, fat vocabularies

A recent study by University of Delaware researchers suggests exercise can boost kids’ vocabulary growth. The article, published in the Journal of Speech, Language, and Hearing Research, details one of the first studies on the effect of exercise on vocabulary learning in children.

Human Voice Recognition AI Now a Reality: “Thai Speech Emotion Recognition Data Sets and Models” Now Free to Download

Chulalongkorn University’s Faculty of Engineering and Faculty of Arts have jointly developed the “Thai Speech Emotion Recognition Data Sets and Models”, now available for free download, to help sales operations and service systems respond better to customers’ needs.

Potential Vocal Tracking App Could Detect Depression Changes

According to the World Health Organization, more than 264 million people worldwide have major depressive disorder and another 20 million have schizophrenia. During the 180th ASA Meeting, Carol Espy-Wilson from the University of Maryland will discuss how a person’s mental health status is reflected in the coordination of speech gestures. The keynote lecture, “Speech Acoustics and Mental Health Assessment,” will take place Tuesday, June 8.

Voice Acting Unlocks Speech Production, Therapy Knowledge

Many voice actors use a variety of speech vocalizations and patterns to create unique and memorable characters. How they create those amazing voices could help speech pathologists better understand the muscles involved in creating words and sounds. During the 180th ASA Meeting, Colette Feehan from Indiana University will talk about how voice actor performances can lead to a better understanding of the speech muscles under our control. The session, “Articulatory and acoustic phonetics of voice actors,” will take place Tuesday, June 8.

Acoustics in Focus: Virtual Press Conference Schedule for 180th Meeting of Acoustical Society of America

Press conferences at the 180th ASA Meeting will cover the latest in acoustical research during the Acoustics in Focus meeting. The virtual press conferences will take place each day of the meeting and offer reporters and outlets the opportunity to hear key presenters talk about their research. To ensure the safety of attendees, volunteers, and ASA staff, Acoustics in Focus will be hosted entirely online.

Save-the-Date: Acoustics in Focus, June 8-10, Offers New Presentation Options

The Acoustical Society of America will hold its 180th meeting June 8-10. To ensure the safety of attendees, volunteers, and ASA staff, the June meeting, “Acoustics in Focus,” will be hosted entirely online with new features to ensure an exciting experience for attendees. Reporters are invited to attend the meeting at no cost and participate in a series of virtual press conferences featuring a selection of newsworthy research.

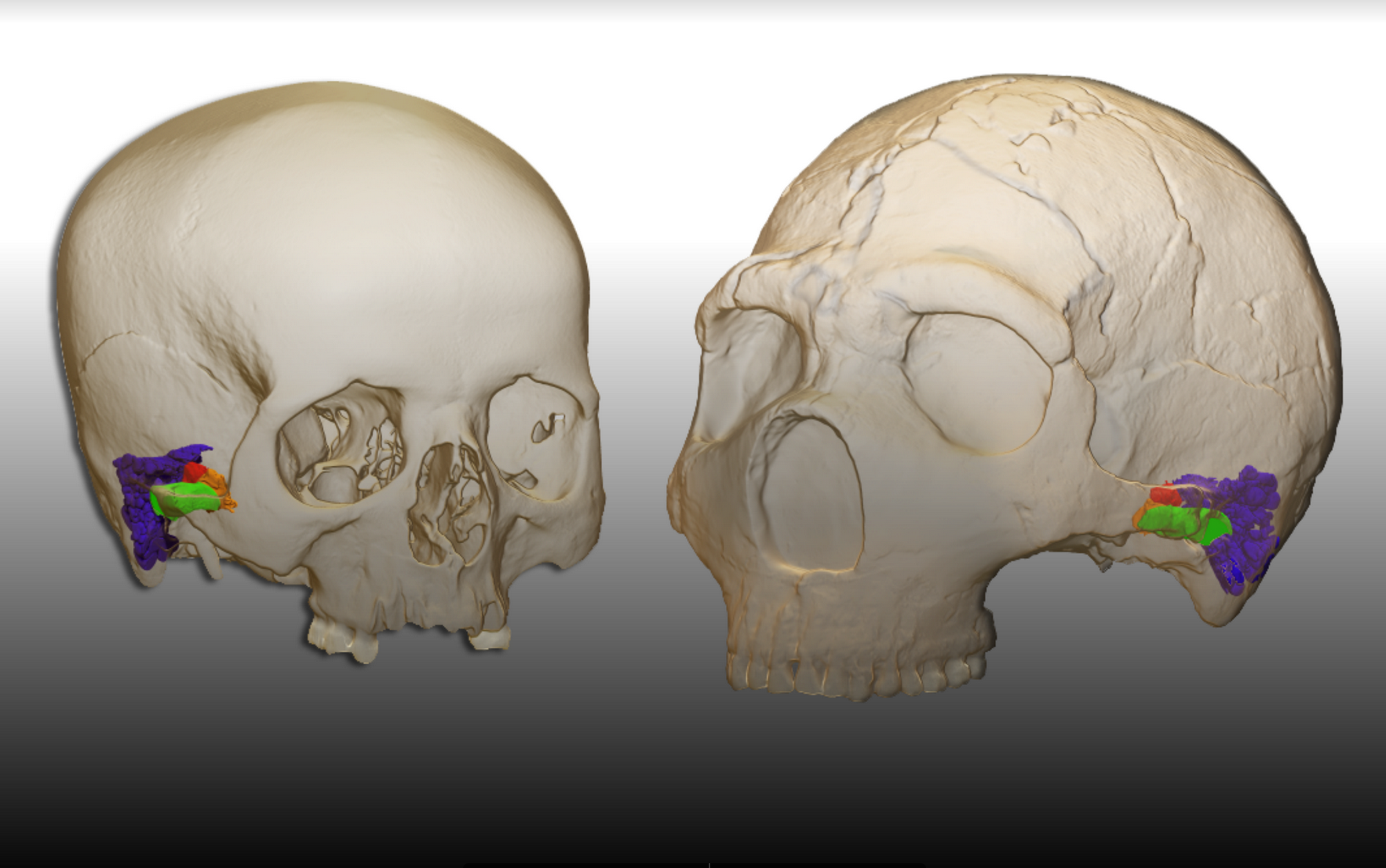

Neandertals had the capacity to perceive and produce human speech

Neandertals — the closest ancestor to modern humans — possessed the ability to perceive and produce human speech, according to a new study published by an international multidisciplinary team of researchers including Binghamton University anthropology professor Rolf Quam and graduate student Alex Velez.

Joe Biden gives hope to millions who stutter

Recently inaugurated President Joe Biden is a positive role model to people who stutter and will raise awareness of stuttering worldwide, according to Rodney Gabel, stuttering expert and professor at Binghamton University, State University of New York. He also serves…

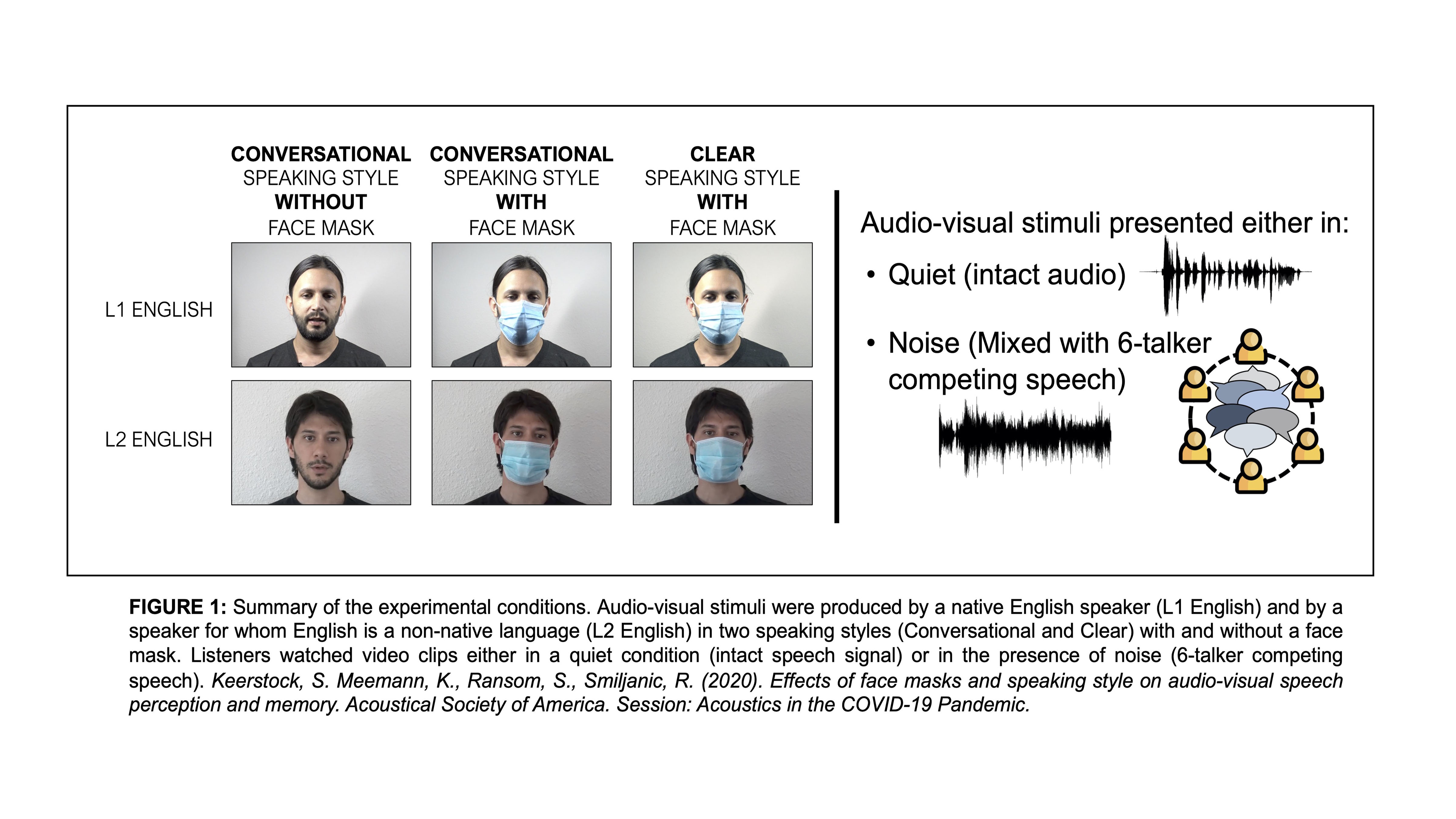

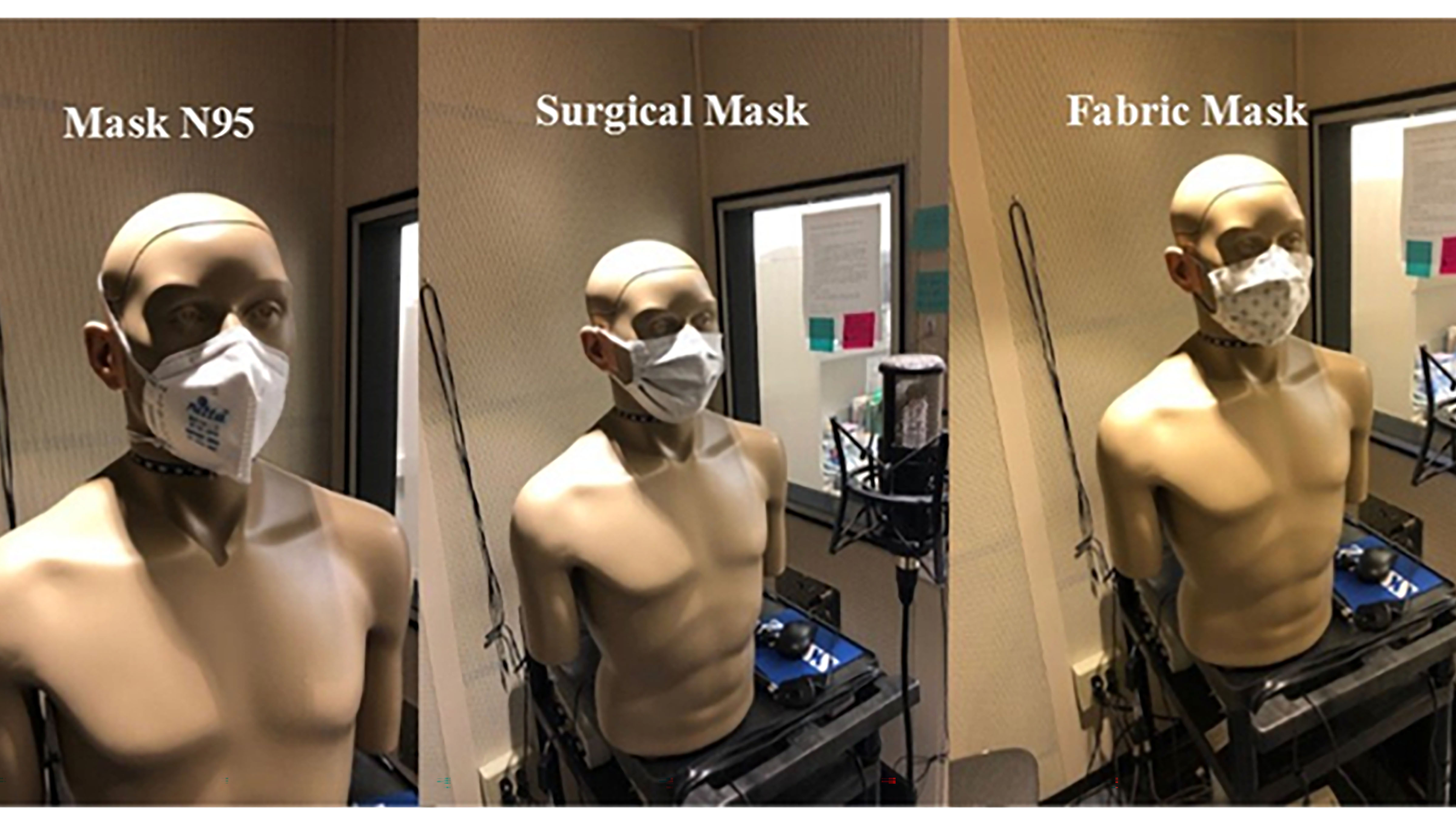

Face Masks Provide Additional Communication Barrier for Nonnative Speech

Though face masks are important and necessary for controlling the spread of the new coronavirus, they result in muffled speech and a loss of visual cues during communication. Sandie Keerstock, Rajka Smiljanic, and their colleagues examine how this loss of visual information impacts speech intelligibility and memory for native and nonnative speech. They will discuss these communication challenges and how to address them at the 179th ASA Meeting, Dec. 7-10.

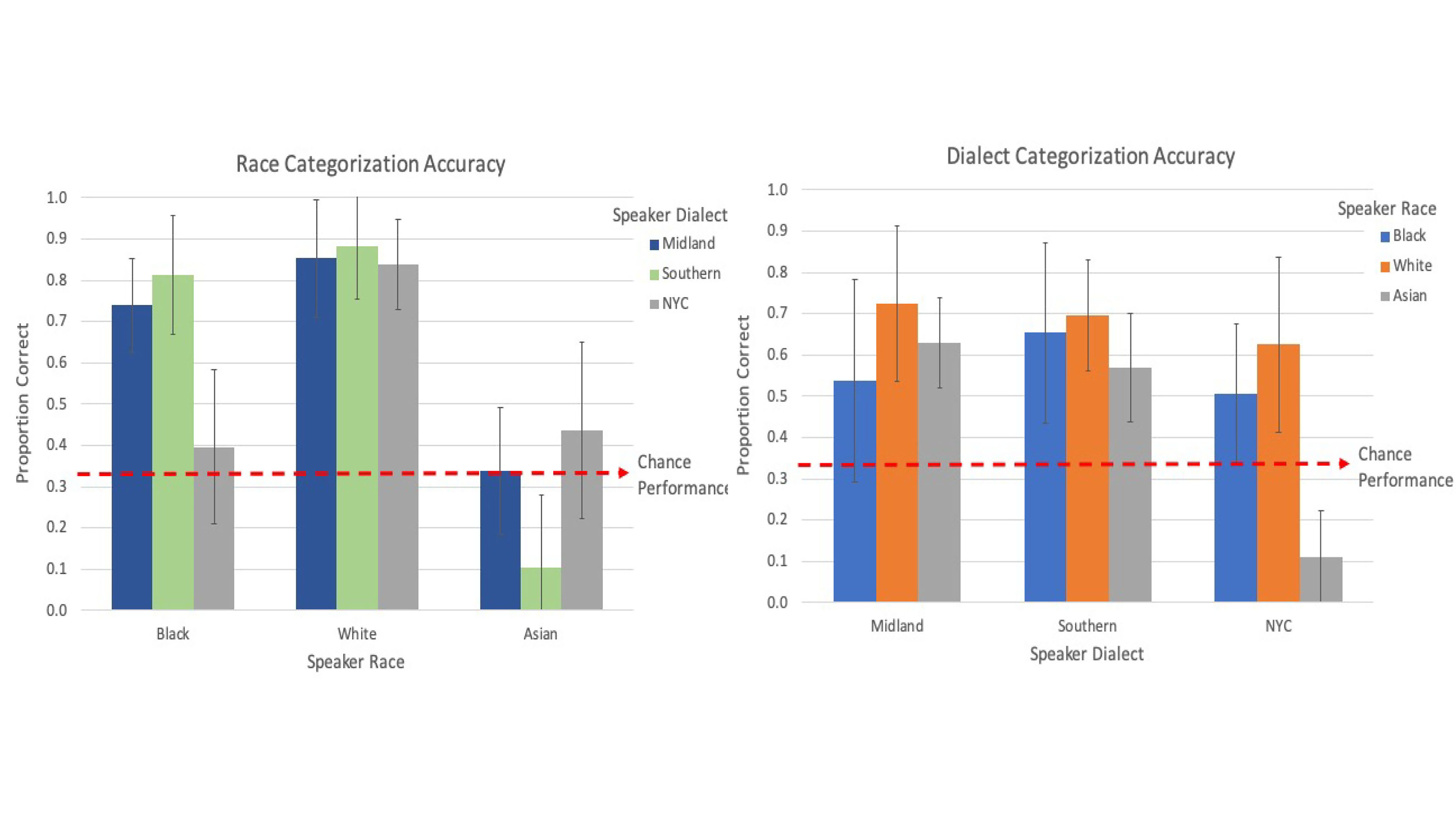

How Much Does the Way You Speak Reveal About You?

Listeners can extract a lot of information about a person from their acoustic speech signal. During the 179th ASA Meeting, Dec. 7-10, Tessa Bent, Emerson Wolff, and Jennifer Lentz will describe their study in which listeners were told to categorize 144 unique audio clips of monolingual English talkers into Midland, New York City, and Southern U.S. dialect regions, and Asian American, Black/African American, or white speakers.

Masked Education: Which Face Coverings are Best for Student Comprehension?

With the ubiquity of masks due to the coronavirus pandemic, understanding speech has become difficult. This especially applies in classroom settings, where the presence of a mask and the acoustics of the room have an impact on students’ comprehension. Pasquale Bottalico has been studying the effects of masks on communication. He will discuss his findings on the best way to overcome hurdles in classroom auditory perception caused by facial coverings at the 179th ASA Meeting.

Accent Perception Depends on Backgrounds of Speaker, Listener

Visual cues can change listeners’ perception of others’ accents, and people’s past exposure to varied speech can also impact their perception of accents. Ethan Kutlu will discuss his team’s work testing the impact that visual input and linguistic diversity have on listeners’ perceived accentedness judgments in two different locations: Gainesville, Florida, and Montreal, Canada. The session will take place Dec. 9 as part of the 179th ASA Meeting.

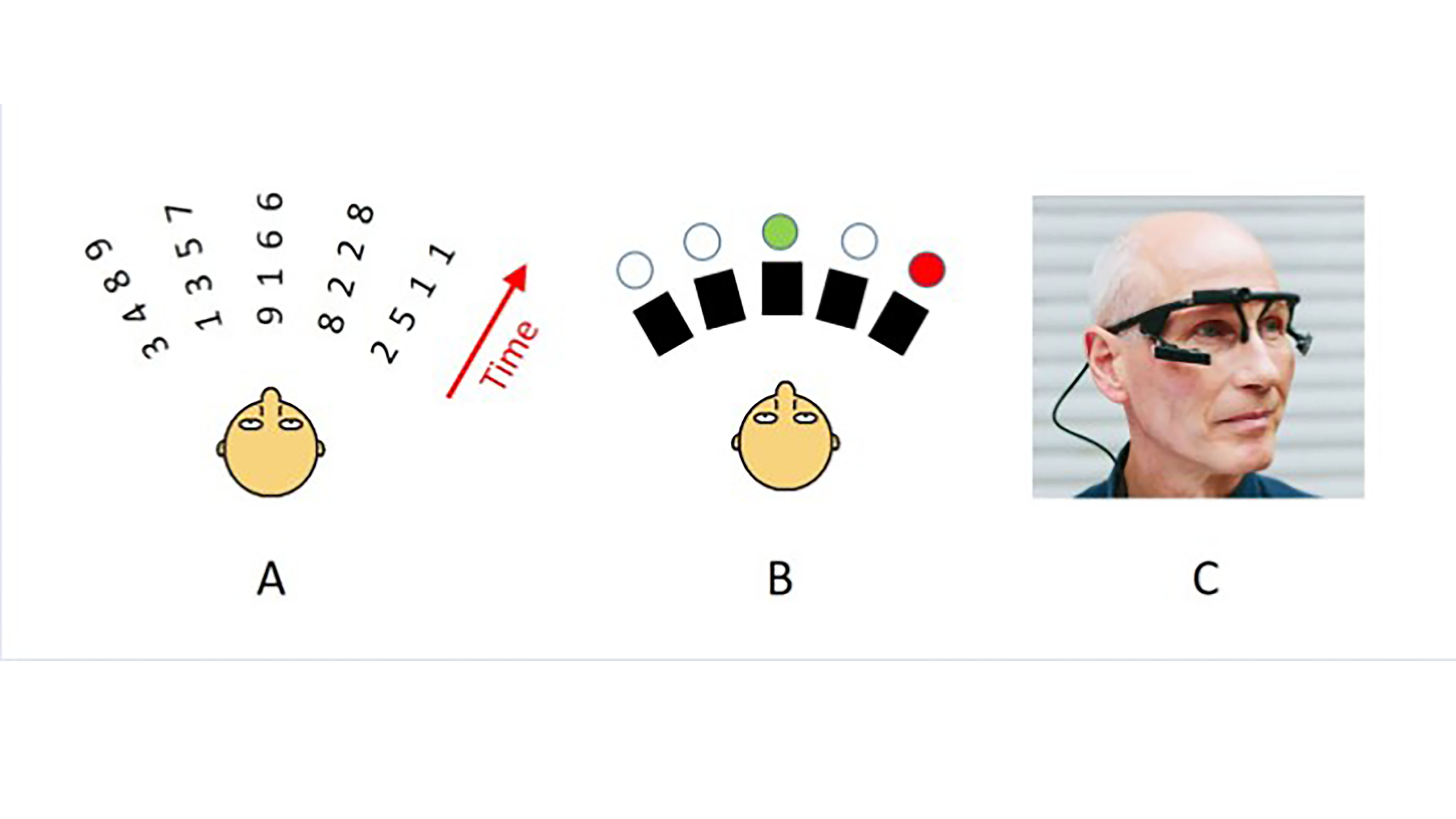

How Does Eye Position Affect ‘Cocktail Party’ Listening?

Several acoustic studies have shown that the position of your eyes determines where your visual spatial attention is directed, which automatically influences your auditory spatial attention. Researchers are currently exploring its impact on speech intelligibility. During the 179th ASA Meeting, Virginia Best will describe her work to determine whether there is a measurable effect of eye position within cocktail party listening situations.

Acoustics Virtually Everywhere: 25 Scientists Summarize Research They’re Presenting This Week at ASA’s December Meeting

As part of the 179th ASA Meeting, 25 sound scientists summarize their innovative research in 300-500 words for a general audience and provide helpful video, photos, and audio. These lay language papers are written for everyone, not just the scientific community. Acousticians are doing important work to make hospitals quieter, map the global seafloor, translate musical notes into emotion, and understand how the human voice changes with age.

Save-the-Date: Virtual Scientific Meeting on Sound, Dec. 7-11

The Acoustical Society of America will hold its 179th meeting Dec. 7-11. To ensure the safety of attendees, volunteers, and ASA staff, the December meeting, “Acoustics Virtually Everywhere,” will be hosted entirely online. The conference brings together interdisciplinary groups of scientists spanning physics, medicine, music, psychology, architecture, and engineering to discuss their latest research — including research related to COVID-19.

International Year of Sound Virtual Speaker Series Focuses on Tone of Your Voice

The Acoustical Society of America continues its series of virtual talks featuring acoustical experts as part of the International Year of Sound celebration. For the third presentation in the series, Nicole Holliday, an assistant professor of linguistics at the University of Pennsylvania, will examine how our voices convey meaning in their tone and what listeners perceive. Specifically, her virtual talk on Aug. 20 will reflect on what language can tell us about identity and inequality.

McGovern Medical School students step up to relieve burdens for front line physicians

Just a few weeks ago, some sounds like “s” and “th” were difficult to pronounce for 6-year-old Owen McKay, who was diagnosed with an articulation disorder when he was 4 years old. Now, he can say them both well, thanks to his daily tutoring sessions with a McGovern Medical School student.

What are You Looking At? ‘Virtual’ Communication in the Age of Social Distancing

When discussions occur face-to-face, people know where their conversational partner is looking and vice versa. With “virtual” communication due to COVID-19 and the expansive use of mobile and video devices, now more than ever, it’s important to understand how these technologies impact communication. Where do people focus their attention? The eyes, mouth, the whole face? And how do they encode conversation? A first-of-its-kind study set out to determine whether being observed affects people’s behavior during online communication.

Finding Meaning in ‘Rick and Morty,’ One Burp at a Time

One of the first things viewers of “Rick and Morty” might notice about Rick is his penchant for punctuating his speech with burps. Brooke Kidner has analyzed the frequency and acoustics of belching while speaking, and by zeroing in on the specific pitches and sound qualities of a midspeech burp, aims to find what latent linguistic meaning might be found in the little-studied gastrointestinal grumbles. Kidner will present her findings at the 178th ASA Meeting.